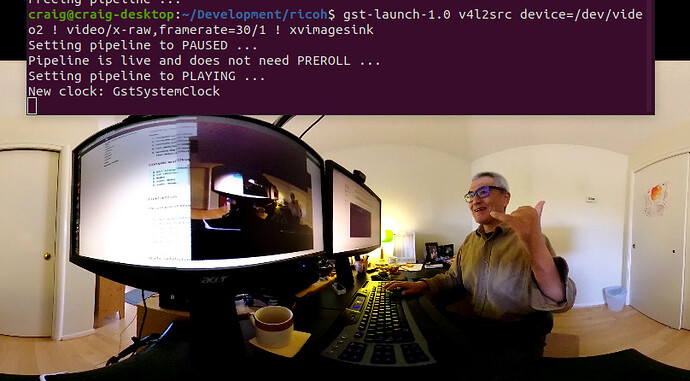

Check that GStreamer is decoding in hardware rather than in software.

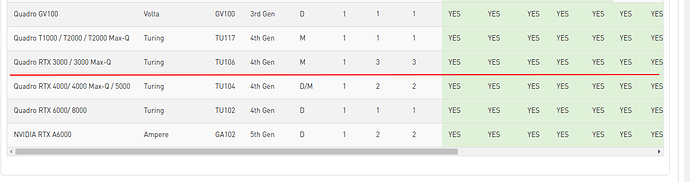

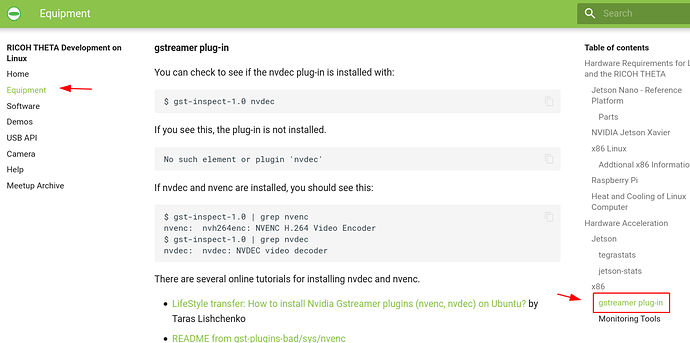

Do you have the nvdec plug-in installed? It is part of the gst-plugins-bad source (not binary) distribution.

What is the output of this:

gst-inspect-1.0 nvdec
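If you want to script that check instead of reading the output by eye, something like the following might work. It is only a sketch: it assumes `gst-inspect-1.0` exits with a non-zero status when it cannot find the element, and `GST_INSPECT` and `check_element` are names I made up for illustration.

```shell
# Assumption: gst-inspect-1.0 returns a non-zero exit status when the
# requested element or plug-in is not installed.
# GST_INSPECT is a hypothetical override so the tool path can be changed.
GST_INSPECT="${GST_INSPECT:-gst-inspect-1.0}"

# check_element NAME: report whether GStreamer can find the element NAME.
check_element() {
    if "$GST_INSPECT" "$1" >/dev/null 2>&1; then
        echo "$1: available"
    else
        echo "$1: missing"
        return 1
    fi
}

# Check for the NVIDIA decoder discussed above.
check_element nvdec || echo "nvdec was not found; it may need to be built from the gst-plugins-bad source"
```

If it reports `nvdec: missing`, the articles below cover building the plug-in from source.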

These articles may help:

gst-plugins-bad/README at 1.14.5 · GStreamer/gst-plugins-bad · GitHub

How to install Nvidia Gstreamer plugins (nvenc, nvdec) on Ubuntu? - LifeStyleTransfer

Install NVDEC and NVENC as GStreamer plugins · GitHub

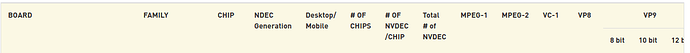

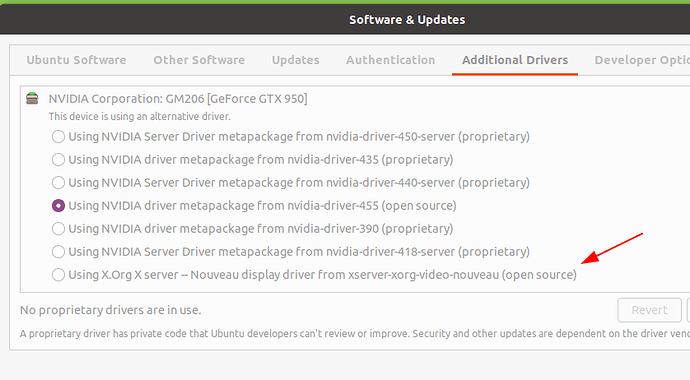

You have quite a nice graphics card that supports everything.

I just added additional information on using nvdec with gstreamer to the main linux streaming community doc available here.

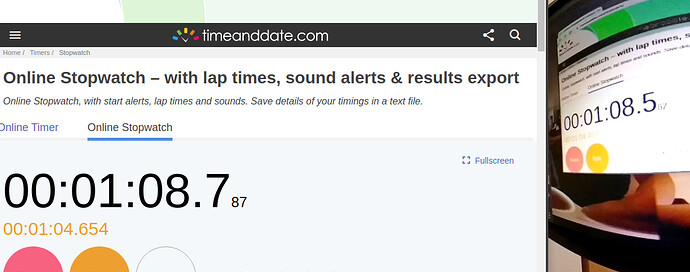

I also ran the compilation test on a fresh x86 machine.

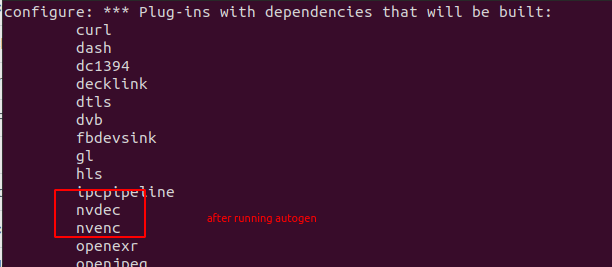

$ NVENCODE_CFLAGS="-I/home/craig/Development/gstreamer/gst-plugins-bad/sys/nvenc" ./autogen.sh --disable-gtk-doc --with-cuda-prefix="/usr/local/cuda"

The process is involved. I don’t think the nvdec plug-in is needed on all systems, but I’m not sure which systems need it.

Updated Oct 22 Afternoon

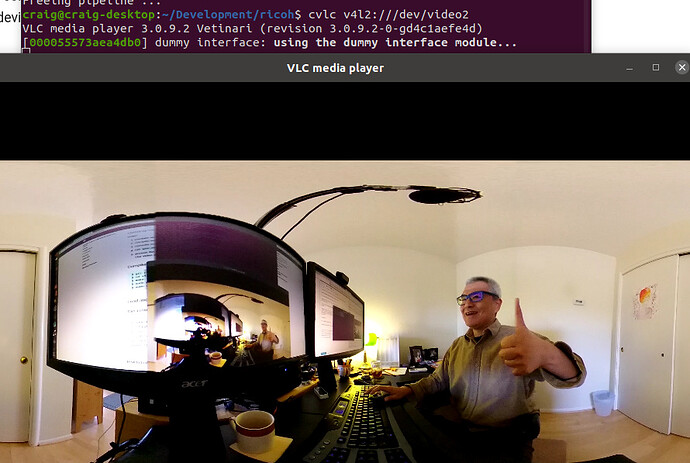

Do you have qos=false in the pipeline? It’s in the source code for gst_viewer.c.

Try this first, if you haven’t already.

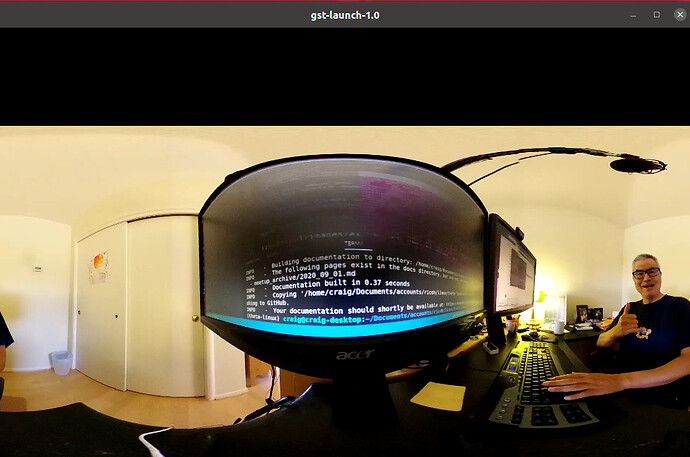

    if (strcmp(cmd_name, "gst_loopback") == 0)
        pipe_proc = "decodebin ! autovideoconvert ! "
            "video/x-raw,format=I420 ! identity drop-allocation=true !"
            "v4l2sink device=/dev/video0 qos=false sync=false";
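To make it easier to see where `qos=false` sits, here is the same pipeline description assembled in a small shell helper with the loopback device as a parameter. This is a sketch only: `loopback_pipeline` is a hypothetical name, and I added a space after the last `!` for readability. It just builds and inspects the string; it does not run the pipeline.

```shell
# Hypothetical helper: print the loopback pipeline description for a given
# v4l2loopback device (defaults to /dev/video0, as in gst_viewer.c above).
loopback_pipeline() {
    device="${1:-/dev/video0}"
    # printf reuses '%s' for each argument, so the pieces are concatenated.
    printf '%s' \
        "decodebin ! autovideoconvert ! " \
        "video/x-raw,format=I420 ! identity drop-allocation=true ! " \
        "v4l2sink device=${device} qos=false sync=false"
}

# Confirm that the sink options include qos=false before rebuilding.
pipeline="$(loopback_pipeline /dev/video0)"
case "$pipeline" in
    *"qos=false"*) echo "qos=false is set" ;;
    *)             echo "qos=false is missing" ;;
esac
```

If your copy of gst_viewer.c has a different sink line, compare it against this string and add `qos=false` next to `sync=false`.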