@codetricity Thanks for this suggestion. I’ve actually been looking at this article recently!

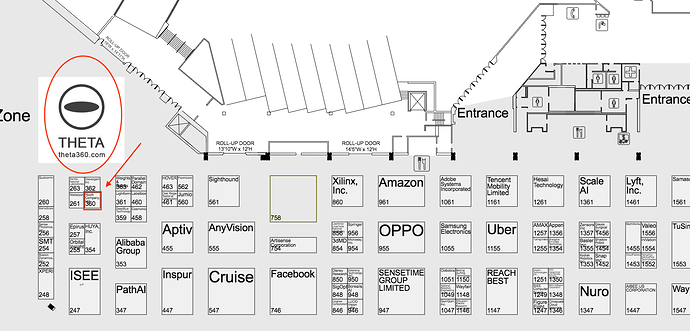

Is anyone headed to CVPR (June 18-20) in Long Beach, CA? I’ll be helping at the RICOH booth (#360), running demos of TensorFlow functionality inside a THETA V (four demos: Detect, Stylize, Speech, and Classify) as well as the new HDR2EXR plug-in created by community member @Kasper.