I am a VR app developer. I have just completed the USB audio and video playback function and tested it with the THETA X. The app has not been released yet.

Great job. Thanks for sharing.

Are you using Unity for this?

Are you going to add 360 navigation from the headset?

Do you plan to commercialize your project?

Tell us more about your plans and how you built the application.

There are some ideas for inspiration from the community member below.

Hi Craig. Thanks for the ideas.

This app is a VR video player and file manager. It can play local videos, NDI wireless videos, and USB videos, and it supports FOA, SOA, and HOA Ambisonic audio. I also plan to support the DLNA and SMB protocols in future versions.
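For readers unfamiliar with the acronyms, FOA, SOA, and HOA stand for first-order, second-order, and higher-order Ambisonics. A full-sphere Ambisonic signal of order N carries (N + 1)² channels, which a short sketch can make concrete. The function name below is hypothetical, and treating HOA as third order is an assumption about the usage in this thread, not something the post states.

```python
def ambisonic_channel_count(order: int) -> int:
    """Channel count of a full-sphere Ambisonic signal of the given order."""
    if order < 0:
        raise ValueError("order must be non-negative")
    return (order + 1) ** 2


# HOA is shown at third order here as an example; higher orders follow the same formula.
for label, order in [("FOA", 1), ("SOA", 2), ("HOA (3rd order)", 3)]:
    print(f"{label}: {ambisonic_channel_count(order)} channels")
# FOA: 4 channels
# SOA: 9 channels
# HOA (3rd order): 16 channels
```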

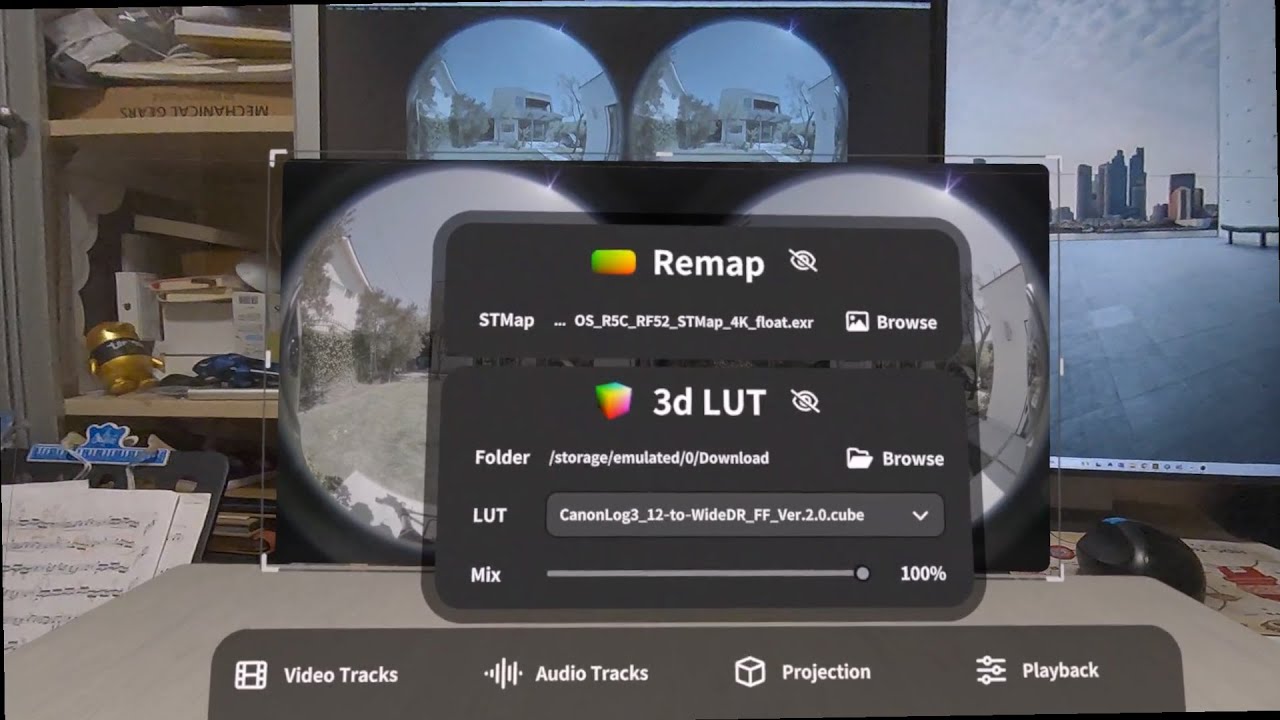

As shown in this video

This app is mainly used for real-time preview during VR video shooting. It supports real-time fisheye video dewarping based on STMaps, supports LUTs and professional color grading functions, and supports many projection and 3D formats.
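As a rough illustration of STMap-based dewarping: an STMap stores, for every output pixel, the normalized (s, t) coordinate to sample from the source frame. The sketch below is a minimal CPU version and is not the app's implementation. The names `apply_stmap`, `Image`, and `STMap` are hypothetical, and it assumes nearest-neighbor sampling with t running top to bottom (some tools store t bottom to top). A real-time player would more likely do this lookup on the GPU with filtered sampling.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]                 # rows of RGB pixels
STMap = List[List[Tuple[float, float]]]   # rows of (s, t), each in [0, 1]


def apply_stmap(source: Image, stmap: STMap) -> Image:
    """Build an output image by looking up each pixel's source position in the STMap."""
    src_h = len(source)
    src_w = len(source[0])
    output: Image = []
    for map_row in stmap:
        out_row = []
        for s, t in map_row:
            # Scale normalized coordinates to pixel indices and clamp to the frame.
            x = min(max(int(round(s * (src_w - 1))), 0), src_w - 1)
            y = min(max(int(round(t * (src_h - 1))), 0), src_h - 1)
            out_row.append(source[y][x])
        output.append(out_row)
    return output


# Toy example: a 2x2 frame and an STMap that mirrors it horizontally.
frame = [[(1, 0, 0), (2, 0, 0)],
         [(3, 0, 0), (4, 0, 0)]]
mirror = [[(1.0, 0.0), (0.0, 0.0)],
          [(1.0, 1.0), (0.0, 1.0)]]
print(apply_stmap(frame, mirror))
```

Because the map is fixed for a given lens and projection, it only needs to be computed once, and each video frame then costs one lookup per output pixel.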

I hope that users can watch immersive videos or 3D videos shot by VR cameras without the help of a computer, just using a VR headset.

I also want to add a USB OTG function so that users can use the VR headset to directly view photos and videos saved on the camera, without needing a computer to copy and transfer the files.

This app is developed in Unity, but many functions are built on ffmpeg in C/C++.

Great job. Right now, how do the videos get from the camera to the headset? Are you using WiFi for the transfer?

After you edit the video in the headset, where does it go? Do you transfer it to a website for virtual video tours?

Very cool.

There are two ways to receive camera video: wireless and wired.

- Wireless: the camera outputs video through HDMI or SDI and sends NDI signals through a hardware converter. My app has a built-in NDI receiver and can directly play NDI audio and video.

- Wired: the camera outputs video through HDMI/SDI and connects to the VR headset through a USB capture card. My app supports the UVC/UAC protocols.

Users can use my app to make sure that what they see is what they will get when shooting VR videos, instead of shooting blind.

It is indeed possible to edit videos in real time in the VR headset now, but the edits apply to playback only; the app cannot transcode and save the result. VR headsets are just Android devices and cannot replace PCs for transcoding.

This app is just a tool; it is only used for shooting preview and media playback. My app does not have functions such as uploading or virtual tours.

It’s a pretty nice tool for previewing 360 video while shooting.

Do you plan to sell or distribute it?

After setting up the video, can the videographer start and stop the recording from within the headset? Or do they take off the headset and control the camera with a mobile app/laptop?

I am not a professional videographer, so I do not understand some of the terminology you are using. My questions may be a bit too simple.

I’m interested in the workflow for videographers.

BTW, our student intern at Oppkey just won a Meta Quest 3S with 128GB in an engineering contest. I’m not sure if they’ll even use the device. Can the Meta Quest 3S run your app?

Are you using the microphone on the THETA X? Or do you have a spatial audio microphone rig as the audio input?

Seems like a cool and exciting app to really improve taking 360 video.

I will release my app on the Meta Quest App store. I plan to make it a free app with paid unlocking features.

For VR action cameras such as the RICOH THETA and Insta360, I hope to control the camera through the app, as long as these manufacturers provide an SDK or USB commands. Professional film cameras, however, have their own control methods, so with them my app serves only as a preview tool.

After my app is released, you can install it on your Meta Quest 3S. I would love to get your feedback on it.

In my video, I use the built-in microphone of THETA X, but my app also supports external audio recording devices.

This product is actually most suitable for VR180 3D shooting scenarios, such as those using Canon Dual fisheye lenses.

In fact, I originally developed this app to optimize the workflow in this video.

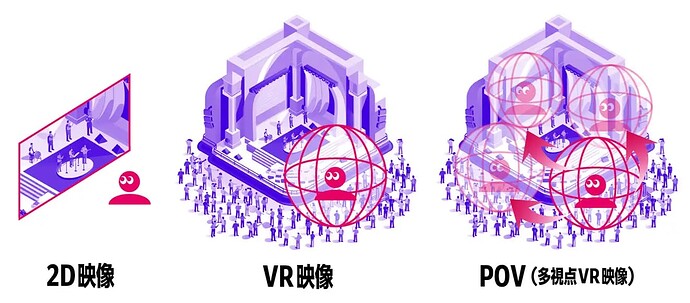

For the purpose of brainstorming new ideas, what do you think about the difference between video files and live streaming video? I’ve been following the work of Connex in Japan, where they use 4 or more 360 cameras to stream multiple audience positions. The audience can then switch between different positions, basically walking around the performance stage.

It seems like your system would be excellent for adjusting 4 or 5 360 cameras recording simultaneously, especially if there were a way to switch cameras and see the different scenes.

For live streaming videos, my app supports multi-view playback. Users select video streams through the UI panel. Each video stream corresponds to a camera position.
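One way to picture the multi-view selection described above is a small registry that maps each stream to a camera position and tracks which one is active. This is only a sketch under assumptions: the class names, field names, and stream labels are hypothetical and are not taken from the app.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class VideoStream:
    name: str      # hypothetical label shown in the UI panel
    position: str  # camera position this stream corresponds to


class MultiViewSelector:
    """Tracks the available streams and which one the viewer is watching."""

    def __init__(self) -> None:
        self._streams: Dict[str, VideoStream] = {}
        self._active: Optional[str] = None

    def add_stream(self, stream: VideoStream) -> None:
        self._streams[stream.name] = stream
        if self._active is None:
            self._active = stream.name  # first stream added is the default view

    def names(self) -> List[str]:
        return list(self._streams)

    def select(self, name: str) -> VideoStream:
        if name not in self._streams:
            raise KeyError(f"unknown stream: {name}")
        self._active = name
        return self._streams[name]

    @property
    def active(self) -> Optional[VideoStream]:
        return self._streams.get(self._active) if self._active else None


selector = MultiViewSelector()
selector.add_stream(VideoStream("cam-1", "front of stage"))
selector.add_stream(VideoStream("cam-2", "stage left"))
selector.select("cam-2")
print(selector.active.position)  # stage left
```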

USB video/audio is not suitable for this use case. After all, we don’t want the user’s VR headset tethered to a USB hub with five or six USB cables.

NDI can be used instead, so the number of video streams is effectively unlimited. Just connect an NDI converter to each camera.

Local files and live streaming suit different use cases. Local files mean that creators can do more post-processing of audio and video before delivery, while in live streaming scenarios such as concerts, users are more focused on real-time interaction.

So as a video player, I need to support both workflows as much as possible.