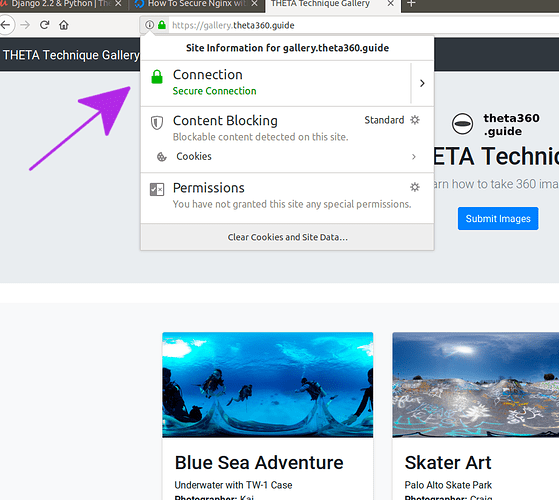

I recently built a 360 image technique gallery with Django to test web technologies for displaying RICOH THETA images and sharing 360 photography techniques.

The process was straightforward, thanks to A-Frame, which handles all the 360 navigation and display.

If you’re interested in working with @jcasman and me on the project, leave a note below.

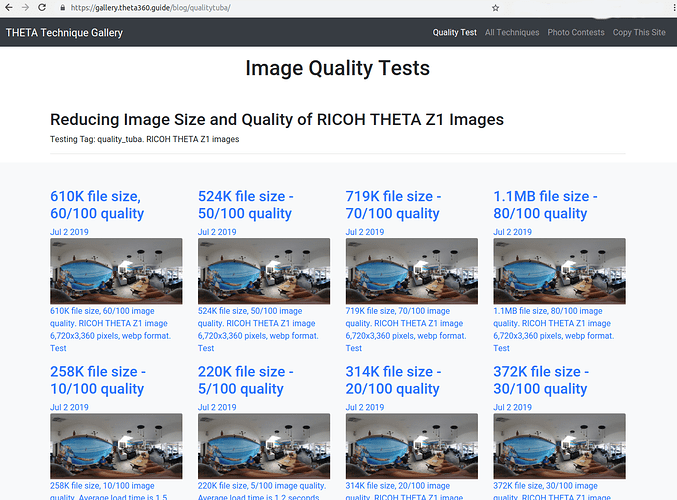

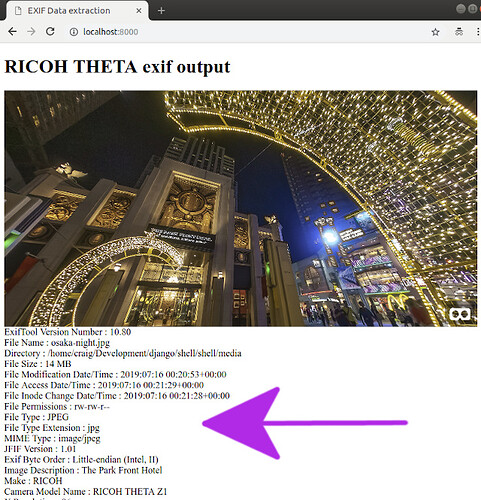

In addition to the primary Grid page of images, the site shows different views of each image.

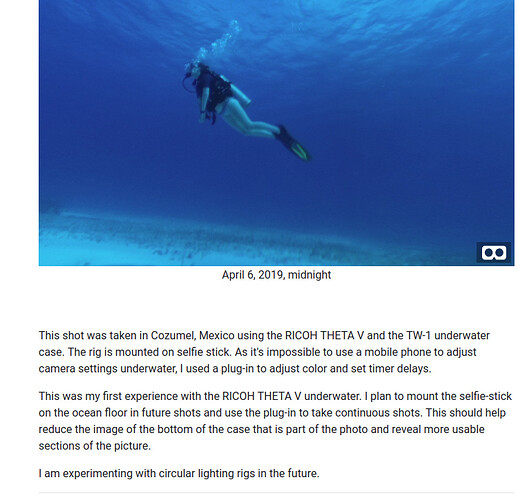

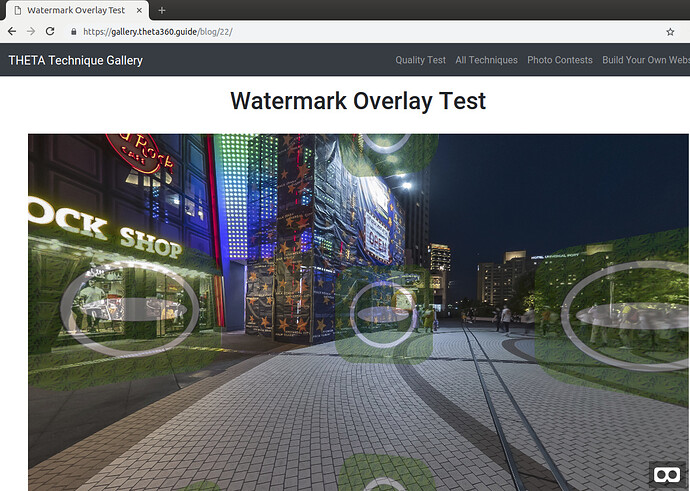

There’s a details view with space for several paragraphs of text about the image. In the view below, I embedded the A-Frame scene into the web page. The embedded image remains navigable.

There’s also a full-screen VR headset mode with head motion and controller navigation.

If viewed in a web page, people can scroll through the images and see summary descriptions.

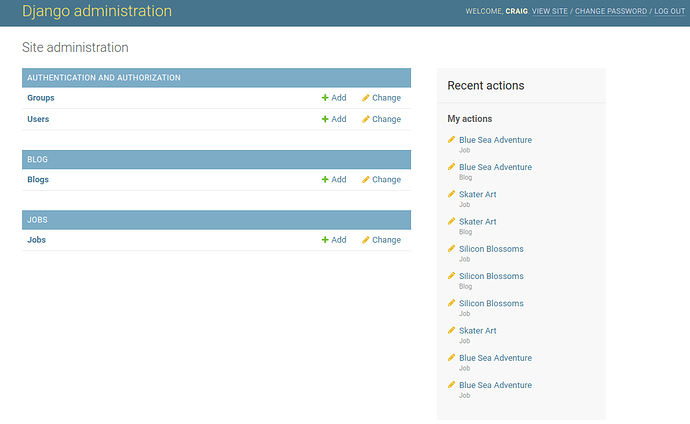

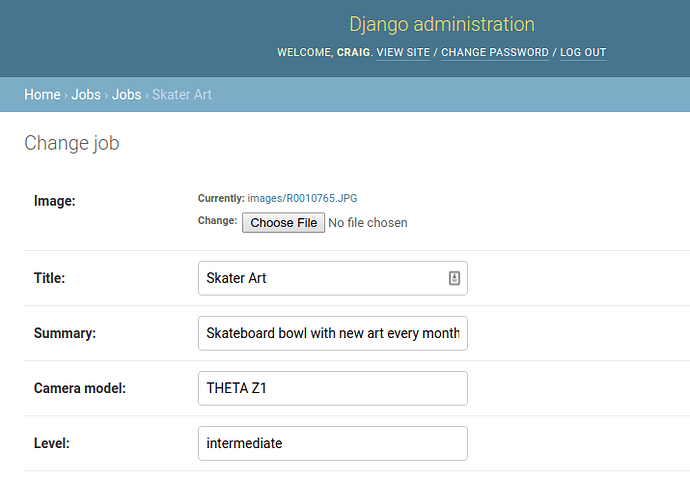

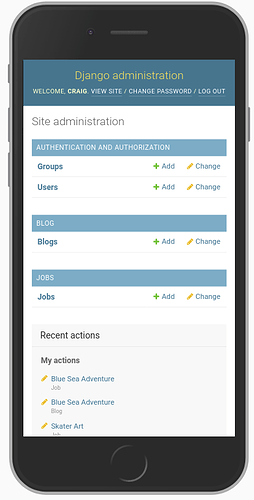

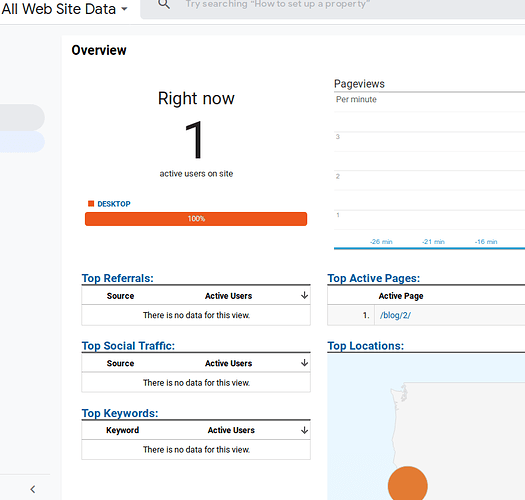

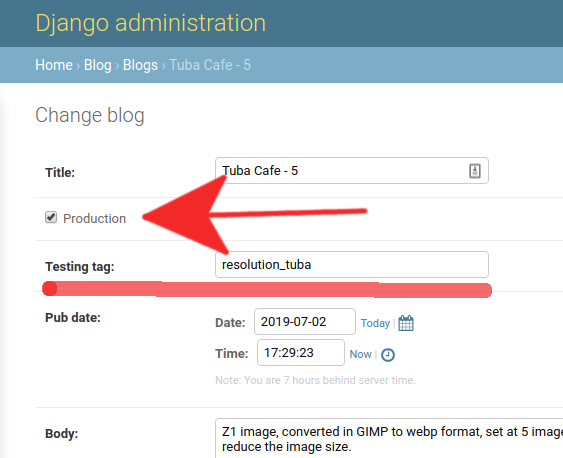

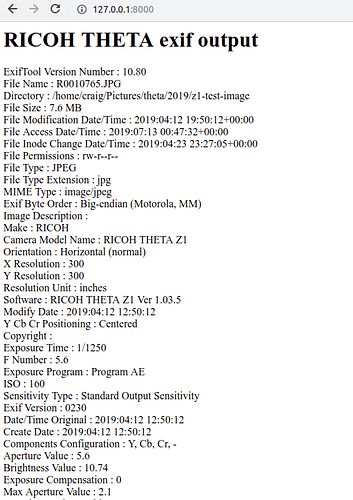

Management System

The site has user login with staff and admin roles.

New images are added to the gallery through a web interface.

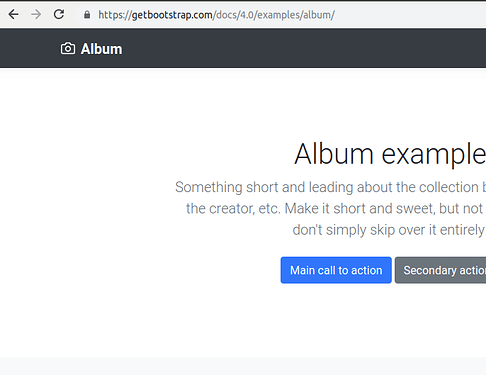
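As a rough illustration of the role split described above, here is a minimal sketch in plain Python. The `User` class is a hypothetical stand-in, not the site's code. Its flag names mirror the `is_staff` and `is_superuser` fields on Django's built-in user model, but the rules about who may add images or manage accounts are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class User:
    """Hypothetical stand-in for a logged-in user.

    The flag names mirror Django's built-in user fields; how the gallery
    actually maps them to roles is an assumption.
    """
    username: str
    is_authenticated: bool = False
    is_staff: bool = False
    is_superuser: bool = False


def can_add_image(user: User) -> bool:
    """Assumed rule: only logged-in staff or admins may add images."""
    return user.is_authenticated and (user.is_staff or user.is_superuser)


def can_manage_users(user: User) -> bool:
    """Assumed rule: only admins may manage other accounts."""
    return user.is_authenticated and user.is_superuser
```

In a real Django project, checks like these would more likely live in view decorators or the admin site than in hand-written functions.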

Laying out the Images

The album layout is adapted from the Bootstrap Album example.

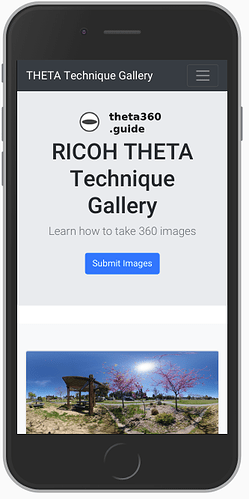

Mobile Resizing

Thanks to Bootstrap, the album resizes automatically, dropping from three columns to one on mobile.
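The three-to-one column behavior comes from Bootstrap's grid classes. The sketch below generates that kind of markup from a list of image URLs, assuming the gallery kept the `col-md-4` columns used by the Bootstrap 4 Album example. The function name `album_row` and the exact card markup are illustrative, not taken from the site.

```python
from html import escape


def album_row(image_urls):
    """Return Bootstrap 4 grid markup with one card per image URL.

    col-md-4 spans 4 of 12 grid units from the md breakpoint up (three
    cards per row); below that, each column takes the full width.
    """
    cards = []
    for url in image_urls:
        cards.append(
            '  <div class="col-md-4">\n'
            '    <div class="card mb-4">\n'
            f'      <img class="card-img-top" src="{escape(url, quote=True)}" alt="">\n'
            '    </div>\n'
            '  </div>'
        )
    return '<div class="row">\n' + "\n".join(cards) + "\n</div>"
```

In the Django site itself, a template loop would normally produce this markup; the Python function only makes the structure explicit.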

A-Frame

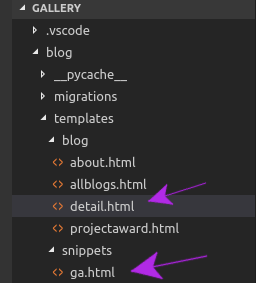

A-Frame is easy to use. In the `<head>` of your HTML file, add this:

    <script src="https://cdnjs.cloudflare.com/ajax/libs/aframe/0.7.1/aframe.min.js" integrity="sha256-SNDsmWBFQwJAjxLymQu5Fqh6rW4RKLJXUQboy3O0BUA=" crossorigin="anonymous"></script>

In the body, this section loads the image:

    <div id="main-image">
      <a-scene embedded>
        <a-sky src="{{blog.image.url}}"></a-sky>
      </a-scene>
    </div>

The CSS styling:

    a-scene {
      height: 600px;
      width: 100%;
    }

    #main-image {
      margin: auto;
    }
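To make the template substitution concrete, here is a small standard-library sketch of what happens to the `{{blog.image.url}}` placeholder when a page is rendered. Django's template engine does this on the real site. The `render_scene` helper and the `$image_url` placeholder are hypothetical and only imitate that step.

```python
from html import escape
from string import Template

# Same scene markup as in the article, with the Django variable replaced
# by a string.Template placeholder.
SCENE_TEMPLATE = Template(
    '<div id="main-image">\n'
    '  <a-scene embedded>\n'
    '    <a-sky src="$image_url"></a-sky>\n'
    '  </a-scene>\n'
    '</div>'
)


def render_scene(image_url: str) -> str:
    """Fill in the image URL, HTML-escaping it much as Django's autoescaping would."""
    return SCENE_TEMPLATE.substitute(image_url=escape(image_url, quote=True))
```

Calling `render_scene("/media/images/theta.jpg")` (a made-up path) returns the scene markup with that URL as the `src` of `<a-sky>`.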

Next Steps

We are looking for the following:

- photographers who are willing to share their images and techniques on the site in the future

- web developers interested in using, copying, or contributing to the code for this technique gallery

If You’re a Photographer

- Pictures must be taken with a THETA V, Z1, SC, or S

- Must include information on how the picture was taken

- Self-promotion is OK. You can include information on your photography studio in the description.

The images in this article are not the ones we will use on the site. They are placeholders for testing the system. We would like to use your images.

If You’re a Web Developer

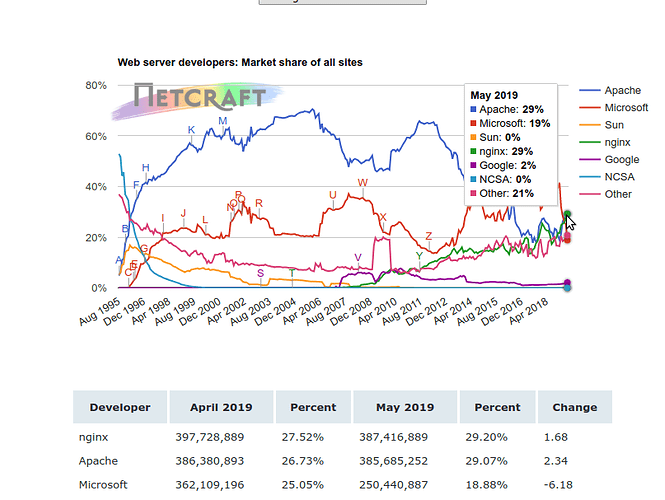

We are using these technologies.

- A-Frame

- Bootstrap 4 (HTML, CSS)

- Django 2.2

- Python 3.6

- PostgreSQL

Future Challenges

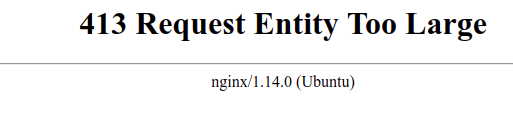

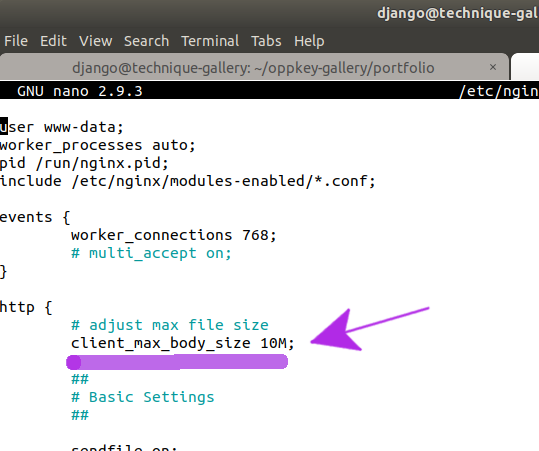

Images are currently stored at full resolution, which may cause problems when we move the site to DigitalOcean for hosting.
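One possible way to ease the full-resolution problem is to serve downscaled copies. The sketch below only works out the target dimensions while keeping the aspect ratio. The 4096-pixel cap is an arbitrary assumption, the function name `scaled_size` is hypothetical, and the actual resizing would need an imaging library that is not shown here.

```python
from typing import Tuple


def scaled_size(width: int, height: int, max_width: int) -> Tuple[int, int]:
    """Return (width, height) shrunk to fit max_width, keeping the aspect ratio.

    Images that are already narrow enough are returned unchanged.
    """
    if width <= max_width:
        return width, height
    scale = max_width / width
    return max_width, round(height * scale)


# A THETA V still is 5376 x 2688; a Z1 still is 6720 x 3360.
print(scaled_size(5376, 2688, 4096))  # (4096, 2048)
print(scaled_size(6720, 3360, 4096))  # (4096, 2048)
```

Because the source images are 2:1 equirectangular, the scaled copies stay 2:1 and can still be used as the `src` of an `<a-sky>`.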