I have a Theta V. Currently it takes about 0.4 seconds to get the live view from the camera into Unity. Thanks to the person who made the Theta V Wifi Client Mode Streaming Demo for Unity. Is there any way to get that image faster? I have tried to figure out whether I could get an un-stitched live preview, but I haven’t found a way to do it. Does anyone know if it is possible, and if it is, are there any tips on where I could look for the answer?

Working project with 200 ms latency by @Jake_Kenin

https://ideas.theta360.guide/blog/award201905/

Other approaches

I suggest you read through Jake’s thread first, as it discusses the latency issue and different techniques to get around it.

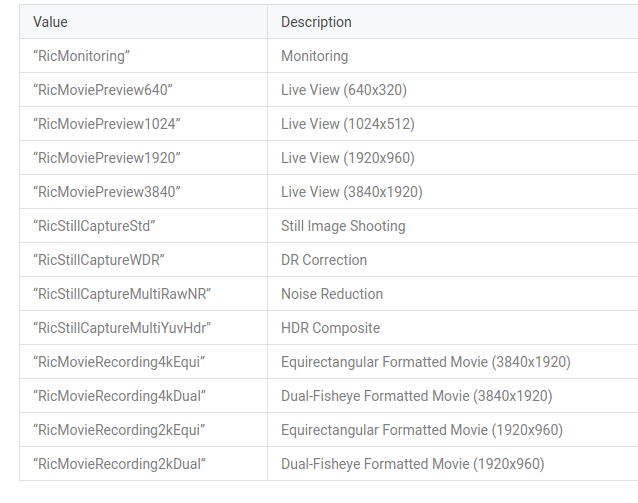

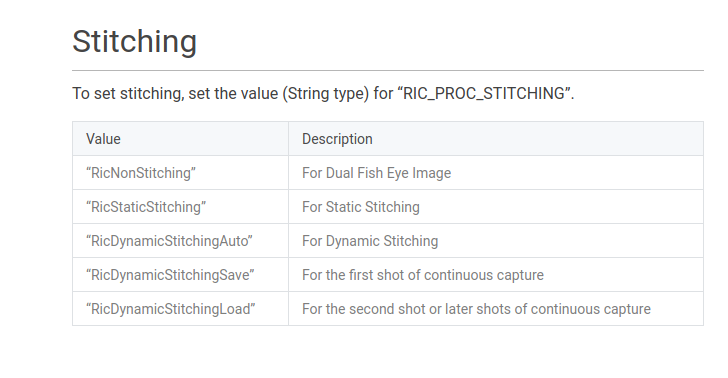

I have not tried this, but you can experiment with the Camera API to get direct access to the Android OS commands for the camera.

https://api.ricoh/docs/theta-plugin-reference/camera-api/

I do not think you can turn off stitching for Live View, but I have not tried.

You can use this GitHub repo as a base:

Note that even when the RICOH THETA is connected directly to Unity with a USB cable, I am getting latency of at least 0.4 seconds on Windows 10 with the RICOH-supplied USB driver and the 4K video stream.

You may get lower latency with RTSP.
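Whatever the transport (RTSP, the Wi-Fi live preview, or USB), one general technique that keeps display latency from growing is to let a reader thread overwrite a single slot with the newest frame instead of queueing every frame. A slow consumer then skips stale frames rather than falling further behind. This is a minimal sketch of that pattern only, not something from the projects mentioned in this thread; the `LatestFrame` class is a hypothetical name, and the code that actually decodes frames from the camera is not shown.

```python
import threading


class LatestFrame:
    """Holds only the most recent frame; unread older frames are overwritten.

    A reader thread calls put() for every decoded frame, and the consumer
    (display or OpenCV processing) calls get() whenever it is ready.
    """

    def __init__(self):
        self._cond = threading.Condition()
        self._frame = None
        self._seq = 0  # incremented on every put()

    def put(self, frame):
        with self._cond:
            self._frame = frame  # replace, never queue
            self._seq += 1
            self._cond.notify_all()

    def get(self, last_seq=0, timeout=None):
        """Return (seq, frame) for a frame newer than last_seq, or None on timeout."""
        with self._cond:
            if not self._cond.wait_for(lambda: self._seq > last_seq, timeout):
                return None
            return self._seq, self._frame
```

A display loop would call `holder.get(last_seq)` each iteration and pass the returned sequence number back in on the next call, so it only ever waits for a frame it has not seen yet.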

Thanks for the great answer. With @Jake_Kenin’s project I got lovely results: the lag was only 100 ms to 200 ms.

@kristira, thanks for the report back on the 100ms to 200ms latency lag. This is great.

If you’re able to share any of your project, that would be great. Even the concept of the project would be useful as this field of live streaming 360 video is under constant change and concepts give other people new ideas to think about. It’s an exciting time right now as we’re starting to see some projects move from prototype to production.

The basic concept of the project is to put a 360 camera on a robot, send the video to a server, and use OpenCV to recognize text (room numbers) and some objects (humans and a couple of different types of objects). The robot will warn if it detects that a human is too close. Human reaction latency is about 300 ms, so if the camera latency is more than that, it would be safer for a human to drive the robot, but when the camera latency is less than that, it is safer for the computer to drive the robot.
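The 300 ms rule of thumb above can be written as a small check. This is a sketch under stated assumptions: `safer_driver` is a hypothetical helper, `processing_ms` is a placeholder for the OpenCV recognition time (the 150 ms used below is an illustrative value, not a measurement), and a tie goes to the human as the conservative choice.

```python
HUMAN_REACTION_MS = 300  # approximate human latency quoted in the post above


def safer_driver(camera_latency_ms, processing_ms=0.0, human_ms=HUMAN_REACTION_MS):
    """Return "computer" if camera-to-decision latency beats a human, else "human"."""
    total_ms = camera_latency_ms + processing_ms
    return "computer" if total_ms < human_ms else "human"


# Stream latencies reported in this thread:
print(safer_driver(400))  # ~0.4 s stream -> human
print(safer_driver(200))  # ~200 ms with @Jake_Kenin's project -> computer
print(safer_driver(200, processing_ms=150))  # 350 ms in total -> human
```

The last line shows why the recognition time has to be counted too: a 200 ms stream alone is under the threshold, but not once detection is added on top.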

For autonomous robots alone there are a lot of different devices and sensors, but I would like to have a camera which recognizes objects. The robot could also carry a portable computer to do the recognition, but then power becomes a problem. When the camera sends a live preview to a server, the server can be as powerful as needed.

This is a pure research project, but we are testing some small equipment and trying to figure out what kind of equipment would be needed to operate heavy industrial machines. The project results will be (mostly) public, and I will publish the 360 camera part in this forum if we do something interesting with it. The project timeline is quite long: it lasts two years.

Strategy 1 - relay stream to high-powered server for processing

To relay the stream to another server, you can also refer to the great project from @Hugues.
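In Wi-Fi client mode the live preview arrives as a Motion JPEG stream (the `camera.getLivePreview` command of the THETA Web API), so a relay usually has to cut that byte stream into individual JPEG frames before forwarding them. A minimal sketch, assuming each frame starts with the JPEG SOI marker `FF D8`, ends with the EOI marker `FF D9`, and carries no embedded thumbnail that would contain its own EOI. The HTTP request itself is not shown, and `iter_jpeg_frames` is a hypothetical helper name, not part of any THETA library.

```python
from typing import Iterable, Iterator

SOI = b"\xff\xd8"  # JPEG start-of-image marker
EOI = b"\xff\xd9"  # JPEG end-of-image marker


def iter_jpeg_frames(chunks: Iterable[bytes]) -> Iterator[bytes]:
    """Yield complete JPEG frames from an MJPEG byte stream read in arbitrary chunks.

    Multipart boundaries and headers between frames are discarded because
    only the bytes from SOI through EOI are kept.
    """
    buf = bytearray()
    for chunk in chunks:
        buf.extend(chunk)
        while True:
            start = buf.find(SOI)
            if start < 0:
                # No frame start yet; keep the last byte in case it is a split 0xFF
                del buf[:-1]
                break
            end = buf.find(EOI, start + 2)
            if end < 0:
                # Frame incomplete; drop the bytes before SOI and wait for more data
                del buf[:start]
                break
            yield bytes(buf[start:end + 2])
            del buf[:end + 2]
```

Searching for markers instead of parsing `Content-Length` headers keeps the sketch short; a production relay would probably use the multipart headers, and it would rescan less data per chunk.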

Strategy 2 - Process Inside the RICOH THETA Camera with Plug-in

Projects that use OpenCV or FastCV inside the camera:

Note that FastCV is a free library that uses the Qualcomm Snapdragon hardware acceleration. The RICOH THETA V and Z1 use the Snapdragon hardware and run Android OS inside the camera.

- Authydra

- FastCV Painting

- THETA Magic Filters (with GitHub Repo)

- FastCV on RICOH THETA article

Strategy 3 - Process with small board Linux computer attached to RICOH THETA with USB

The NVIDIA Jetson Xavier has a 512-core Volta GPU with Tensor Cores, an 8-core ARM CPU, 16GB of memory, and a 4K H.264 hardware encoder/decoder. It may be enough to handle some of your computer vision requirements.
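One way to see why the hardware decoder matters: before any OpenCV work starts, the board has to turn the H.264 stream back into raw frames. A back-of-the-envelope sketch, assuming a 3840 x 1920 equirectangular stream at about 30 fps decoded to 8-bit RGB (3 bytes per pixel); `raw_rgb_rate_mb_per_s` is a hypothetical helper.

```python
def raw_rgb_rate_mb_per_s(width, height, fps, bytes_per_pixel=3):
    """Uncompressed video data rate in megabytes per second (1 MB = 10**6 bytes)."""
    return width * height * bytes_per_pixel * fps / 1e6


# 4K 360 stream: 3840 x 1920 at about 30 fps
print(round(raw_rgb_rate_mb_per_s(3840, 1920, 30), 1))  # 663.6
```

That is roughly two-thirds of a gigabyte per second of pixel data, which is why decoding on the CPU alone would leave little headroom for recognition.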

@kristira I’m glad it worked for you! If you have any questions just hit me up.

Hello @kristira!

Can you please provide a reference to this demo or to the demo’s source code?

I need to see that such an implementation exists (low-latency Wi-Fi live streaming displayed in Unity).

Then I will proceed with buying my own Theta V.

Thanks in advance.

The amelia drone project is here:

If you want to get lower latency,

If you are using a Linux relay.