The plugin will be available in the plugin store soon.

Introduction

This project is a plugin for the Ricoh Theta V that turns each captured image into a painting-like image using the FastCV image processing SDK.

Note: My original plan was to build a Snap-to-Twitter plugin. However, that project would have taken too long to finish in time for the contest, so I switched to this FastCV Painting project.

Getting Started

I spent some time working with the Theta V device: I installed some plugins and learned what the various LED statuses and buttons mean. Here are some useful links to get started:

Steps for putting your THETA into developer mode

Plugin development community guide

FastCV Image Processing

FastCV is a computer vision library provided by Qualcomm. Its image processing operations are optimized for ARM processors and are particularly effective on Qualcomm’s Snapdragon processors. To use FastCV, you need to program in C/C++ using the Android NDK (Native Development Kit).

Follow this four-part tutorial series to set up the development environment and learn about the image processing library.

Part 1 - FastCV introduction and installation

Part 2 - How To Use Android NDK to Build FastCV for the RICOH THETA

Part 3 - How To Create a FastCV Application with the RICOH THETA Plug-in SDK

Part 4 - FastCV Image Processing

FastCV Painting Plug-in

FastCV Painting is a plugin for the Ricoh Theta V camera. When the user takes a photo, it generates a painting-like picture and stores both the captured and the generated pictures as files on the device.

The initial version of the plugin generates a negative-like picture and an edge-detection picture. The later version also generates a painting-like image. The captured picture is saved as theta_DATETIME.jpg, and the generated pictures as painting_DATETIME.jpg, negative_DATETIME.jpg and edge_DATETIME.jpg.
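The naming scheme can be sketched in plain Java. This is only an illustration: the class and method names are hypothetical, and the timestamp pattern is an assumption, since the plugin's own getDateTime() may format the date differently. The prefixes and the lowercase .jpg extension follow the format strings used in CameraFragment.java.

```java
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.LinkedHashMap;
import java.util.Locale;
import java.util.Map;

public class OutputNames {
    // Assumed timestamp pattern; the plugin's real getDateTime() may differ.
    static String dateTime(Date now) {
        return new SimpleDateFormat("yyyyMMddHHmmss", Locale.US).format(now);
    }

    // Returns the four file names written for one capture, keyed by prefix.
    static Map<String, String> namesFor(String dateTime) {
        Map<String, String> names = new LinkedHashMap<>();
        for (String prefix : new String[] {"theta", "painting", "negative", "edge"}) {
            names.put(prefix, String.format("%s_%s.jpg", prefix, dateTime));
        }
        return names;
    }

    public static void main(String[] args) {
        // Print the names that one capture taken right now would produce.
        namesFor(dateTime(new Date())).values().forEach(System.out::println);
    }
}
```

Sharing one timestamp across all four names keeps the files from a single capture next to each other when the DCIM directory is sorted by name.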

Set Permissions

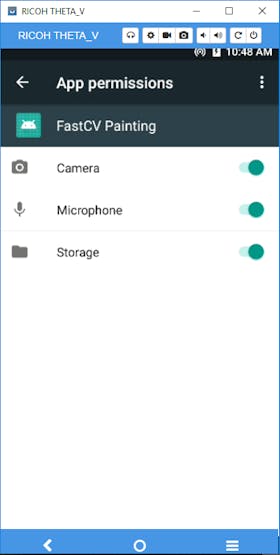

To use the plug-in, you must grant it the camera, microphone and storage permissions. If they are not granted, the plug-in exits immediately after you launch it.

For plugins installed via the plugin store, permissions are automatically set as described in the manifest. During development, you must set the permissions manually.

Vysor makes it easy to set the permissions during development.

Set Permissions via Vysor

Usage

- To run plug-in mode, press and hold the mode button.
- Press the mode button several times until the still mode LED turns on.
- Press the shutter button to capture.
- The captured and processed images are saved in the DCIM directory.

Code Fragments

    byte[] dst2 = mImageProcessor.process3(data);
    if (dst2 != null) {
        // save image
        // String fileName = String.format("diff_%s.jpg", dateTime);
        String fileName = String.format("painting_%s.jpg", dateTime);
        String fileUrl = DCIM + "/" + fileName;
        try (FileOutputStream fileOutputStream = new FileOutputStream(fileUrl)) {
            fileOutputStream.write(dst2); // <- saving image
            registerDatabase(fileName, fileUrl); // <- register the image path with database
            Log.d("CameraFragment", "save: " + fileUrl);
        } catch (IOException e) {
            e.printStackTrace();
        }
    }

The code above calls the process3 method (see the next code fragment), which generates the painting-like image.

    public byte[] process3(byte[] data) {
        Log.d(TAG, "ImageProcess");

        /**
         * Pre-process
         */
        // Convert JPEG format to Bitmap class.
        // To get byte[], copy the content of Bitmap to ByteBuffer.
        Bitmap bmp = BitmapFactory.decodeByteArray(data, 0, data.length);
        ByteBuffer byteBuffer = ByteBuffer.allocate(bmp.getByteCount());
        bmp.copyPixelsToBuffer(byteBuffer);
        int width = bmp.getWidth();
        int height = bmp.getHeight();

        /**
         * Image Processing with FastCV native code
         */
        // get byte[] from byteBuffer and input to the function.
        byte[] dstBmpArr = median2Filter(byteBuffer.array(), width, height);
        if (dstBmpArr == null) {
            Log.e(TAG, "Failed to median2Filter(). dstBmpArr is null.");
            return null;
        }

        /**
         * Post-process
         */
        // copy byte[] to the content of Bitmap class.
        Bitmap dstBmp = Bitmap.createBitmap(width, height, Bitmap.Config.ARGB_8888);
        dstBmp.copyPixelsFromBuffer(ByteBuffer.wrap(dstBmpArr));

        // compress Bitmap class to JPEG format.
        ByteArrayOutputStream baos = new ByteArrayOutputStream();
        dstBmp.compress(Bitmap.CompressFormat.JPEG, 100, baos);
        byte[] dst = baos.toByteArray();
        return dst;
    }

The Java method process3 above calls the native C++ function median2Filter through JNI (see below).

    JNIEXPORT jbyteArray
    JNICALL Java_painting_theta360_fastcvsample_ImageProcessor_median2Filter
    (
        JNIEnv* env,
        jobject obj,
        jbyteArray img,
        jint w,
        jint h
    )
    {
        DPRINTF("median2Filter()");

        /**
         * convert input data to jbyte object
         */
        jbyte* jimgData = NULL;
        jboolean isCopy = 0;
        jimgData = env->GetByteArrayElements( img, &isCopy);
        if (jimgData == NULL) {
            DPRINTF("jimgData is NULL");
            return NULL;
        }

        /**
         * process
         */
        // RGBA8888 -> RGB888
        void* rgb888 = fcvMemAlloc( w*h*4, 16);
        fcvColorRGBA8888ToRGB888u8((uint8_t*) jimgData, w, h, 0, (uint8_t*)rgb888, 0);

        // Convert to Gray scale
        void* gray = fcvMemAlloc( w*h, 16);
        fcvColorRGB888ToGrayu8((uint8_t*)rgb888, w, h, 0, (uint8_t*)gray, 0);

        // Gaussian blur (11x11); the buffer is still named "median"
        void* median = fcvMemAlloc(w*h, 16);
        fcvFilterGaussian11x11u8((uint8_t*)gray,w,h,(uint8_t*)median,0);

        // Reverse the image color
        void* bitwise_not = fcvMemAlloc( w*h, 16);
        fcvBitwiseNotu8((uint8_t*)median, w, h, 0, (uint8_t*)bitwise_not, 0);

        // Gray scale -> RGBA8888
        void* dst_rgba8888 = fcvMemAlloc( w*h*4, 16);
        colorGrayToRGBA8888((uint8_t*)bitwise_not, w, h, (uint8_t*)dst_rgba8888); /// negative photo

        // XOR the negative with the original RGBA data, byte by byte
        void* dst2_rgba8888 = fcvMemAlloc( w*h*4, 16);
        fcvBitwiseXoru8((uint8_t*)dst_rgba8888, w*4, h, w*4, (uint8_t*)jimgData, w*4, (uint8_t*)dst2_rgba8888, w*4);

        /**
         * copy to destination jbyte object
         */
        jbyteArray dst = env->NewByteArray(w*h*4);
        if (dst == NULL){
            DPRINTF("dst is NULL");
            // release
            fcvMemFree(dst_rgba8888);
            fcvMemFree(dst2_rgba8888);
            fcvMemFree(bitwise_not);
            fcvMemFree(median);
            fcvMemFree(gray);
            fcvMemFree(rgb888);
            env->ReleaseByteArrayElements(img, jimgData, 0);
            return NULL;
        }
        env->SetByteArrayRegion(dst,0,w*h*4,(jbyte*)dst2_rgba8888);
        DPRINTF("copy");

        // release
        fcvMemFree(dst_rgba8888);
        fcvMemFree(dst2_rgba8888);
        fcvMemFree(bitwise_not);
        fcvMemFree(median);
        fcvMemFree(gray);
        fcvMemFree(rgb888);
        env->ReleaseByteArrayElements(img, jimgData, 0);
        DPRINTF("processImage end");
        return dst;
    }
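As a rough sanity check on memory use, the sketch below adds up the native scratch buffers that median2Filter allocates with fcvMemAlloc, using the 5376x2688 picture size set in takePicture() in CameraFragment.java. The class and method names are hypothetical, and the total covers only the native side; the Java-side Bitmap and ByteBuffer copies come on top of it.

```java
public class BufferBudget {
    // Bytes per pixel of each native buffer, in allocation order:
    // rgb888, gray, median, bitwise_not, dst_rgba8888, dst2_rgba8888.
    static final int[] BYTES_PER_PIXEL = {4, 1, 1, 1, 4, 4};

    // Total bytes requested from fcvMemAlloc for one width x height image.
    static long nativeBytes(int width, int height) {
        long pixels = (long) width * height;
        long total = 0;
        for (int bpp : BYTES_PER_PIXEL) {
            total += bpp * pixels;
        }
        return total;
    }

    public static void main(String[] args) {
        long bytes = nativeBytes(5376, 2688);
        System.out.printf("%d bytes (%.1f MiB)%n", bytes, bytes / (1024.0 * 1024.0));
    }
}
```

For a full-resolution capture this comes to 216,760,320 bytes, a little over 200 MiB, all held at the same time because every buffer is freed only at the end of the function. Freeing each buffer as soon as the next stage has consumed it might lower the peak noticeably.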

Photos and Paintings

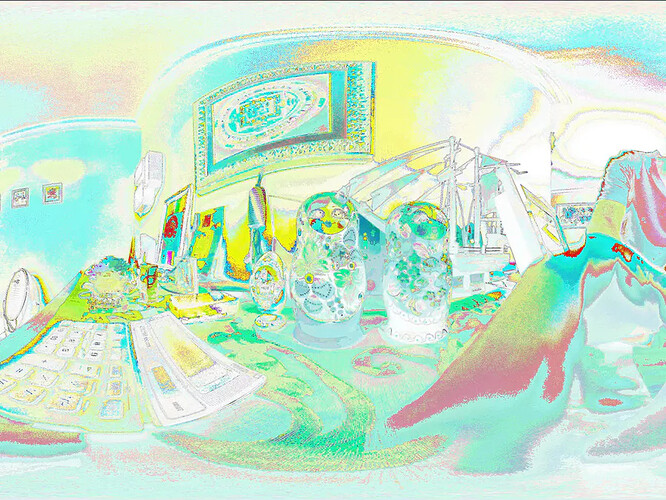

Painting-like image.

Negative-like image.

Edge-detection image.

Captured image.

Summary

This initial version uses a simple bitwise manipulation of the captured image. Future improvements include using more advanced image processing techniques to generate more realistic results, such as watercolor and cartoon styles.
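The bitwise manipulation can be sketched per pixel in plain Java. This is an illustrative port of the arithmetic in median2Filter, not the plugin's code: it leaves out the 11x11 Gaussian blur, the gray weights are an assumed integer approximation (FastCV's exact conversion may differ), and the class and method names are hypothetical.

```java
public class PaintingSketch {
    // Integer luma approximation (assumption; weights sum to 256).
    static int gray(int r, int g, int b) {
        return (77 * r + 150 * g + 29 * b) >> 8;
    }

    // rgba holds 4 bytes per pixel in R, G, B, A order. Returns a new array
    // where each byte is the inverted gray value XOR-ed with the source byte.
    static byte[] paint(byte[] rgba) {
        byte[] out = new byte[rgba.length];
        for (int i = 0; i + 3 < rgba.length; i += 4) {
            int r = rgba[i] & 0xFF;
            int g = rgba[i + 1] & 0xFF;
            int b = rgba[i + 2] & 0xFF;
            int a = rgba[i + 3] & 0xFF;
            int negative = 255 - gray(r, g, b); // the "negative photo" value
            out[i] = (byte) (negative ^ r);
            out[i + 1] = (byte) (negative ^ g);
            out[i + 2] = (byte) (negative ^ b);
            out[i + 3] = (byte) (0xFF ^ a);     // negative's alpha is 0xFF
        }
        return out;
    }

    public static void main(String[] args) {
        // One opaque pure-red pixel.
        byte[] out = paint(new byte[] {(byte) 255, 0, 0, (byte) 255});
        System.out.printf("%d %d %d %d%n",
                out[0] & 0xFF, out[1] & 0xFF, out[2] & 0xFF, out[3] & 0xFF);
    }
}
```

In this sketch both pure white and pure black map to (255, 255, 255), while saturated colors shift toward their complements, which is roughly where the stylized look comes from. Because the negative's alpha byte is 0xFF, XOR-ing it with an opaque source pixel clears the alpha byte.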

Code

ImageProcessor.java

package painting.theta360.fastcvsample;

import android.graphics.Bitmap;

import android.graphics.BitmapFactory;

import android.util.Log;

import java.io.ByteArrayOutputStream;

import java.nio.ByteBuffer;

public class ImageProcessor {

private static final String TAG = "FastCVSample";

static {

// Load JNI Library

System.loadLibrary("fastcvsample");

}

public void init() {

// Initialize FastCV

Log.d(TAG, "Initialize FastCV");

initFastCV();

}

public void cleanup() {

// Cleanup FastCV

Log.d(TAG, "Cleanup FastCV");

cleanupFastCV();

}

public byte[] process(byte[] data) {

Log.d(TAG, "ImageProcess");

/**

* Pre-process

*/

// Convert JPEG format to Bitmap class.

// To get byte[], copy the content of Bitmap to ByteBuffer.

Bitmap bmp = BitmapFactory.decodeByteArray(data, 0, data.length);

ByteBuffer byteBuffer = ByteBuffer.allocate(bmp.getByteCount());

bmp.copyPixelsToBuffer(byteBuffer);

int width = bmp.getWidth();

int height = bmp.getHeight();

/**

* Image Processing with FastCV native code

*/

// get byte[] from byteBuffer and input to the function.

byte[] dstBmpArr = cannyFilter(byteBuffer.array(), width, height);

if (dstBmpArr == null) {

Log.e(TAG, "Failed to cannyFilter(). dstBmpArr is null.");

return null;

}

/**

* Post-process

*/

// copy byte[] to the content of Bitmap class.

Bitmap dstBmp = Bitmap.createBitmap(width, height, Bitmap.Config.ARGB_8888);

dstBmp.copyPixelsFromBuffer(ByteBuffer.wrap(dstBmpArr));

// compress Bitmap class to JPEG format.

ByteArrayOutputStream baos = new ByteArrayOutputStream();

dstBmp.compress(Bitmap.CompressFormat.JPEG,100, baos);

byte[] dst = baos.toByteArray();

return dst;

}

public byte[] process2(byte[] data1, byte[] data2) {

Log.d(TAG, "ImageProcess");

/**

* Pre-process

*/

// Convert JPEG format to Bitmap class.

// To get byte[], copy the content of Bitmap to ByteBuffer.

Bitmap bmp1 = BitmapFactory.decodeByteArray(data1, 0, data1.length);

ByteBuffer byteBuffer1 = ByteBuffer.allocate(bmp1.getByteCount());

bmp1.copyPixelsToBuffer(byteBuffer1);

int width1 = bmp1.getWidth();

int height1 = bmp1.getHeight();

Bitmap bmp2 = BitmapFactory.decodeByteArray(data2, 0, data2.length);

ByteBuffer byteBuffer2 = ByteBuffer.allocate(bmp2.getByteCount());

bmp2.copyPixelsToBuffer(byteBuffer2);

int width2 = bmp2.getWidth();

int height2 = bmp2.getHeight();

// width1 should equal width2, and likewise for the heights

/**

* Image Processing with FastCV native code

*/

// get byte[] from byteBuffer and input to the function.

byte[] dstBmpArr = imageDiff(byteBuffer1.array(), byteBuffer2.array(), width1, height1);

if (dstBmpArr == null) {

Log.e(TAG, "Failed to imageDiff(). dstBmpArr is null.");

return null;

}

/**

* Post-process

*/

// copy byte[] to the content of Bitmap class.

Bitmap dstBmp = Bitmap.createBitmap(width1, height1, Bitmap.Config.ARGB_8888);

dstBmp.copyPixelsFromBuffer(ByteBuffer.wrap(dstBmpArr));

// compress Bitmap class to JPEG format.

ByteArrayOutputStream baos = new ByteArrayOutputStream();

dstBmp.compress(Bitmap.CompressFormat.JPEG,100, baos);

byte[] dst = baos.toByteArray();

return dst;

}

public byte[] process3(byte[] data) {

Log.d(TAG, "ImageProcess");

/**

* Pre-process

*/

// Convert JPEG format to Bitmap class.

// To get byte[], copy the content of Bitmap to ByteBuffer.

Bitmap bmp = BitmapFactory.decodeByteArray(data, 0, data.length);

ByteBuffer byteBuffer = ByteBuffer.allocate(bmp.getByteCount());

bmp.copyPixelsToBuffer(byteBuffer);

int width = bmp.getWidth();

int height = bmp.getHeight();

/**

* Image Processing with FastCV native code

*/

// get byte[] from byteBuffer and input to the function.

byte[] dstBmpArr = median2Filter(byteBuffer.array(), width, height);

// byte[] dstBmpArr = medianFilter(byteBuffer.array(), width, height);

if (dstBmpArr == null) {

Log.e(TAG, "Failed to median2Filter(). dstBmpArr is null.");

return null;

}

/**

* Post-process

*/

// copy byte[] to the content of Bitmap class.

Bitmap dstBmp = Bitmap.createBitmap(width, height, Bitmap.Config.ARGB_8888);

dstBmp.copyPixelsFromBuffer(ByteBuffer.wrap(dstBmpArr));

// compress Bitmap class to JPEG format.

ByteArrayOutputStream baos = new ByteArrayOutputStream();

dstBmp.compress(Bitmap.CompressFormat.JPEG,100, baos);

byte[] dst = baos.toByteArray();

return dst;

}

public byte[] process4(byte[] data) {

Log.d(TAG, "ImageProcess");

/**

* Pre-process

*/

// Convert JPEG format to Bitmap class.

// To get byte[], copy the content of Bitmap to ByteBuffer.

Bitmap bmp = BitmapFactory.decodeByteArray(data, 0, data.length);

ByteBuffer byteBuffer = ByteBuffer.allocate(bmp.getByteCount());

bmp.copyPixelsToBuffer(byteBuffer);

int width = bmp.getWidth();

int height = bmp.getHeight();

/**

* Image Processing with FastCV native code

*/

// get byte[] from byteBuffer and input to the function.

// byte[] dstBmpArr = median2Filter(byteBuffer.array(), width, height);

byte[] dstBmpArr = medianFilter(byteBuffer.array(), width, height);

if (dstBmpArr == null) {

Log.e(TAG, "Failed to medianFilter(). dstBmpArr is null.");

return null;

}

/**

* Post-process

*/

// copy byte[] to the content of Bitmap class.

Bitmap dstBmp = Bitmap.createBitmap(width, height, Bitmap.Config.ARGB_8888);

dstBmp.copyPixelsFromBuffer(ByteBuffer.wrap(dstBmpArr));

// compress Bitmap class to JPEG format.

ByteArrayOutputStream baos = new ByteArrayOutputStream();

dstBmp.compress(Bitmap.CompressFormat.JPEG,100, baos);

byte[] dst = baos.toByteArray();

return dst;

}

// Native Functions

private native void initFastCV();

private native void cleanupFastCV();

private native byte[] cannyFilter(byte[] data, int width, int height);

private native byte[] imageDiff(byte[] data1, byte[] data2, int width, int height);

private native byte[] medianFilter(byte[] data, int width, int height);

private native byte[] median2Filter(byte[] data, int width, int height);

}

FastCVSample.cpp

// FastCVSample.cpp

//==============================================================================

// Include Files

//==============================================================================

#include <stdlib.h>

#include <android/log.h>

#include <fastcv/fastcv.h>

#include "FastCVSample.h"

//==============================================================================

// Declarations

//==============================================================================

#define LOG_TAG "FastCVSample[cpp]"

#define DPRINTF(...) __android_log_print(ANDROID_LOG_DEBUG,LOG_TAG,__VA_ARGS__)

//==============================================================================

// Function Definitions

//==============================================================================

//------------------------------------------------------------------------------

//------------------------------------------------------------------------------

void colorGrayToRGBA8888(const uint8_t* src, unsigned int width, unsigned int height, uint8_t* dst){

if (src == NULL) {

DPRINTF("colorGrayToRGBA8888() src is NULL.");

return;

}

if (dst == NULL) {

DPRINTF("colorGrayToRGBA8888() dst is NULL.");

return;

}

// Gray scale -> RGBA8888

// copy the same value to R, G and B channels.

for (unsigned int i = 0; i < width*height; i++) {

dst[4*i] = src[i];

dst[4*i+1] = src[i];

dst[4*i+2] = src[i];

dst[4*i+3] = 0xFF; // Alpha channel

}

}

//------------------------------------------------------------------------------

//------------------------------------------------------------------------------

JNIEXPORT void JNICALL Java_painting_theta360_fastcvsample_ImageProcessor_initFastCV

(

JNIEnv* env,

jobject obj

)

{

char sVersion[32];

fcvSetOperationMode( (fcvOperationMode) FASTCV_OP_PERFORMANCE );

fcvGetVersion(sVersion, 32);

DPRINTF( "Using FastCV version %s \n", sVersion );

return;

}

//---------------------------------------------------------------------------

//---------------------------------------------------------------------------

JNIEXPORT void JNICALL Java_painting_theta360_fastcvsample_ImageProcessor_cleanupFastCV

(

JNIEnv * env,

jobject obj

)

{

fcvCleanUp();

}

//------------------------------------------------------------------------------

//------------------------------------------------------------------------------

JNIEXPORT jbyteArray

JNICALL Java_painting_theta360_fastcvsample_ImageProcessor_cannyFilter

(

JNIEnv* env,

jobject obj,

jbyteArray img,

jint w,

jint h

)

{

DPRINTF("cannyFilter()");

/**

* convert input data to jbyte object

*/

jbyte* jimgData = NULL;

jboolean isCopy = 0;

jimgData = env->GetByteArrayElements( img, &isCopy);

if (jimgData == NULL) {

DPRINTF("jimgData is NULL");

return NULL;

}

/**

* process

*/

// RGBA8888 -> RGB888

void* rgb888 = fcvMemAlloc( w*h*4, 16);

fcvColorRGBA8888ToRGB888u8((uint8_t*) jimgData, w, h, 0, (uint8_t*)rgb888, 0);

// Convert to Gray scale

void* gray = fcvMemAlloc( w*h, 16);

fcvColorRGB888ToGrayu8((uint8_t*)rgb888, w, h, 0, (uint8_t*)gray, 0);

// Canny filter

void* canny = fcvMemAlloc(w*h, 16);

// The value of lowThresh and highThresh are set appropriately.

fcvFilterCanny3x3u8((uint8_t*)gray,w,h,(uint8_t*)canny, 10, 30);

// fcvFilterSobel3x3u8((uint8_t*)rgb888,w,h,(uint8_t*)canny);

// Reverse the image color

void* bitwise_not = fcvMemAlloc( w*h, 16);

fcvBitwiseNotu8((uint8_t*)canny, w, h, 0, (uint8_t*)bitwise_not, 0);

// ///

// void* median = fcvMemAlloc(w*h, 16);

// fcvFilterMedian3x3u8((uint8_t*)bitwise_not,w,h,(uint8_t*)median);

//

// // Gray scale -> RGBA8888

// void* dst_rgba8888 = fcvMemAlloc( w*h*4, 16);

// colorGrayToRGBA8888((uint8_t*)median, w, h, (uint8_t*)dst_rgba8888);

//

// ///

// Gray scale -> RGBA8888

void* dst_rgba8888 = fcvMemAlloc( w*h*4, 16);

colorGrayToRGBA8888((uint8_t*)bitwise_not, w, h, (uint8_t*)dst_rgba8888);

/**

* copy to destination jbyte object

*/

jbyteArray dst = env->NewByteArray(w*h*4);

if (dst == NULL){

DPRINTF("dst is NULL");

// release

fcvMemFree(dst_rgba8888);

// fcvMemFree(median); //

fcvMemFree(bitwise_not);

fcvMemFree(canny);

fcvMemFree(gray);

fcvMemFree(rgb888);

env->ReleaseByteArrayElements(img, jimgData, 0);

return NULL;

}

env->SetByteArrayRegion(dst,0,w*h*4,(jbyte*)dst_rgba8888);

DPRINTF("copy");

// release

fcvMemFree(dst_rgba8888);

// fcvMemFree(median); //

fcvMemFree(bitwise_not);

fcvMemFree(canny);

fcvMemFree(gray);

fcvMemFree(rgb888);

env->ReleaseByteArrayElements(img,jimgData,0);

DPRINTF("processImage end");

return dst;

}

//------------------------------------------------------------------------------

//------------------------------------------------------------------------------

JNIEXPORT jbyteArray

JNICALL Java_painting_theta360_fastcvsample_ImageProcessor_imageDiff

(

JNIEnv* env,

jobject obj,

jbyteArray img1,

jbyteArray img2,

jint w,

jint h

)

{

DPRINTF("imageDiff()");

/**

* convert input data to jbyte object

*/

jbyte* jimgData1 = NULL;

jboolean isCopy = 0;

jimgData1 = env->GetByteArrayElements( img1, &isCopy);

if (jimgData1 == NULL) {

DPRINTF("jimgData1 is NULL");

return NULL;

}

jbyte* jimgData2 = NULL;

jimgData2 = env->GetByteArrayElements( img2, &isCopy);

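if (jimgData2 == NULL) {

DPRINTF("jimgData2 is NULL");

// release the first array before bailing out; it was not modified, so skip the copy-back

env->ReleaseByteArrayElements(img1, jimgData1, JNI_ABORT);

return NULL;

}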

/**

* process

*/

// RGBA8888 -> RGB888

void* rgb888_1 = fcvMemAlloc( w*h*4, 16);

fcvColorRGBA8888ToRGB888u8((uint8_t*) jimgData1, w, h, 0, (uint8_t*)rgb888_1, 0);

// RGBA8888 -> RGB888

void* rgb888_2 = fcvMemAlloc( w*h*4, 16);

fcvColorRGBA8888ToRGB888u8((uint8_t*) jimgData2, w, h, 0, (uint8_t*)rgb888_2, 0);

// image diff

void* diff = fcvMemAlloc(w*h*4, 16);

fcvImageDiffu8((uint8_t*)rgb888_1, (uint8_t*)rgb888_2, w, h, (uint8_t*)diff);

/**

* copy to destination jbyte object

*/

jbyteArray dst = env->NewByteArray(w*h*4);

if (dst == NULL){

DPRINTF("dst is NULL");

// release

fcvMemFree(diff);

fcvMemFree(rgb888_2);

fcvMemFree(rgb888_1);

env->ReleaseByteArrayElements(img1, jimgData1, 0);

env->ReleaseByteArrayElements(img2, jimgData2, 0);

return NULL;

}

env->SetByteArrayRegion(dst,0,w*h*4,(jbyte*)diff);

DPRINTF("copy");

// release

fcvMemFree(diff);

fcvMemFree(rgb888_2);

fcvMemFree(rgb888_1);

env->ReleaseByteArrayElements(img1, jimgData1, 0);

env->ReleaseByteArrayElements(img2, jimgData2, 0);

DPRINTF("processImage end");

return dst;

}

//------------------------------------------------------------------------------

//------------------------------------------------------------------------------

JNIEXPORT jbyteArray

JNICALL Java_painting_theta360_fastcvsample_ImageProcessor_medianFilter

(

JNIEnv* env,

jobject obj,

jbyteArray img,

jint w,

jint h

)

{

DPRINTF("medianFilter()");

/**

* convert input data to jbyte object

*/

jbyte* jimgData = NULL;

jboolean isCopy = 0;

jimgData = env->GetByteArrayElements( img, &isCopy);

if (jimgData == NULL) {

DPRINTF("jimgData is NULL");

return NULL;

}

/**

* process

*/

// RGBA8888 -> RGB888

void* rgb888 = fcvMemAlloc( w*h*4, 16);

fcvColorRGBA8888ToRGB888u8((uint8_t*) jimgData, w, h, 0, (uint8_t*)rgb888, 0);

// Convert to Gray scale

void* gray = fcvMemAlloc( w*h, 16);

fcvColorRGB888ToGrayu8((uint8_t*)rgb888, w, h, 0, (uint8_t*)gray, 0);

// Filter Median

void* median = fcvMemAlloc(w*h, 16);

fcvFilterMedian3x3u8((uint8_t*)gray,w,h,(uint8_t*)median);

// Reverse the image color

void* bitwise_not = fcvMemAlloc( w*h, 16);

fcvBitwiseNotu8((uint8_t*)median, w, h, 0, (uint8_t*)bitwise_not, 0);

// Gray scale -> RGBA8888

void* dst_rgba8888 = fcvMemAlloc( w*h*4, 16);

colorGrayToRGBA8888((uint8_t*)bitwise_not, w, h, (uint8_t*)dst_rgba8888);

/**

* copy to destination jbyte object

*/

jbyteArray dst = env->NewByteArray(w*h*4);

if (dst == NULL){

DPRINTF("dst is NULL");

// release

fcvMemFree(dst_rgba8888);

fcvMemFree(bitwise_not);

fcvMemFree(median);

fcvMemFree(gray);

fcvMemFree(rgb888);

env->ReleaseByteArrayElements(img, jimgData, 0);

return NULL;

}

env->SetByteArrayRegion(dst,0,w*h*4,(jbyte*)dst_rgba8888);

DPRINTF("copy");

// release

fcvMemFree(dst_rgba8888);

fcvMemFree(bitwise_not);

fcvMemFree(median);

fcvMemFree(gray);

fcvMemFree(rgb888);

env->ReleaseByteArrayElements(img, jimgData, 0);

DPRINTF("processImage end");

return dst;

}

//------------------------------------------------------------------------------

//------------------------------------------------------------------------------

JNIEXPORT jbyteArray

JNICALL Java_painting_theta360_fastcvsample_ImageProcessor_median2Filter

(

JNIEnv* env,

jobject obj,

jbyteArray img,

jint w,

jint h

)

{

DPRINTF("median2Filter()");

/**

* convert input data to jbyte object

*/

jbyte* jimgData = NULL;

jboolean isCopy = 0;

jimgData = env->GetByteArrayElements( img, &isCopy);

if (jimgData == NULL) {

DPRINTF("jimgData is NULL");

return NULL;

}

/**

* process

*/

// RGBA8888 -> RGB888

void* rgb888 = fcvMemAlloc( w*h*4, 16);

fcvColorRGBA8888ToRGB888u8((uint8_t*) jimgData, w, h, 0, (uint8_t*)rgb888, 0);

// Convert to Gray scale

void* gray = fcvMemAlloc( w*h, 16);

fcvColorRGB888ToGrayu8((uint8_t*)rgb888, w, h, 0, (uint8_t*)gray, 0);

// Gaussian blur (11x11); the buffer is still named "median" after the median filter it replaced

void* median = fcvMemAlloc(w*h, 16);

// fcvFilterMedian3x3u8((uint8_t*)gray,w,h,(uint8_t*)median);

fcvFilterGaussian11x11u8((uint8_t*)gray,w,h,(uint8_t*)median,0);

// Reverse the image color

void* bitwise_not = fcvMemAlloc( w*h, 16);

fcvBitwiseNotu8((uint8_t*)median, w, h, 0, (uint8_t*)bitwise_not, 0);

// Gray scale -> RGBA8888

void* dst_rgba8888 = fcvMemAlloc( w*h*4, 16);

colorGrayToRGBA8888((uint8_t*)bitwise_not, w, h, (uint8_t*)dst_rgba8888); /// negative photo

// colorGrayToRGBA8888((uint8_t*)median, w, h, (uint8_t*)dst_rgba8888); /// gray photo

void* dst2_rgba8888 = fcvMemAlloc( w*h*4, 16);

fcvBitwiseXoru8((uint8_t*)dst_rgba8888, w*4, h, w*4, (uint8_t*)jimgData, w*4, (uint8_t*)dst2_rgba8888, w*4);

/**

* copy to destination jbyte object

*/

jbyteArray dst = env->NewByteArray(w*h*4);

if (dst == NULL){

DPRINTF("dst is NULL");

// release

fcvMemFree(dst_rgba8888);

fcvMemFree(dst2_rgba8888);

fcvMemFree(bitwise_not);

fcvMemFree(median);

fcvMemFree(gray);

fcvMemFree(rgb888);

env->ReleaseByteArrayElements(img, jimgData, 0);

return NULL;

}

// env->SetByteArrayRegion(dst,0,w*h*4,(jbyte*)dst_rgba8888);

env->SetByteArrayRegion(dst,0,w*h*4,(jbyte*)dst2_rgba8888);

DPRINTF("copy");

// release

fcvMemFree(dst_rgba8888);

fcvMemFree(dst2_rgba8888);

fcvMemFree(bitwise_not);

fcvMemFree(median);

fcvMemFree(gray);

fcvMemFree(rgb888);

env->ReleaseByteArrayElements(img, jimgData, 0);

DPRINTF("processImage end");

return dst;

}

CameraFragment.java

/**

* Copyright 2018 Ricoh Company, Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package painting.theta360.fastcvsample;

import android.content.ContentResolver;

import android.content.ContentValues;

import android.content.Context;

import android.hardware.Camera;

import android.media.AudioManager;

import android.media.CamcorderProfile;

import android.media.MediaRecorder;

import android.os.Bundle;

import android.os.Environment;

import android.os.FileObserver;

import android.provider.MediaStore;

import android.support.annotation.NonNull;

import android.support.v4.app.Fragment;

import android.util.Log;

import android.view.LayoutInflater;

import android.view.SurfaceHolder;

import android.view.SurfaceView;

import android.view.View;

import android.view.ViewGroup;

import java.io.File;

import java.io.FileOutputStream;

import java.io.IOException;

import java.text.SimpleDateFormat;

import java.util.Date;

/**

* CameraFragment

*/

public class CameraFragment extends Fragment {

public static final String DCIM = Environment.getExternalStoragePublicDirectory(

Environment.DIRECTORY_DCIM).getPath();

private SurfaceHolder mSurfaceHolder;

private Camera mCamera;

private int mCameraId;

private Camera.Parameters mParameters;

private Camera.CameraInfo mCameraInfo;

private CFCallback mCallback;

private AudioManager mAudioManager;//for video

private MediaRecorder mMediaRecorder;//for video

private boolean isSurface = false;

private boolean isCapturing = false;

private boolean isShutter = false;

private File instanceRecordMP4;

private File instanceRecordWAV;

private MediaRecorder.OnInfoListener onInfoListener = new MediaRecorder.OnInfoListener() {

@Override

public void onInfo(MediaRecorder mr, int what, int extra) {

}

};

private MediaRecorder.OnErrorListener onErrorListener = new MediaRecorder.OnErrorListener() {

@Override

public void onError(MediaRecorder mediaRecorder, int what, int extra) {

}

};

private Camera.ErrorCallback mErrorCallback = new Camera.ErrorCallback() {

@Override

public void onError(int error, Camera camera) {

}

};

private SurfaceHolder.Callback mSurfaceHolderCallback = new SurfaceHolder.Callback() {

@Override

public void surfaceCreated(SurfaceHolder surfaceHolder) {

isSurface = true;

open();

}

@Override

public void surfaceChanged(SurfaceHolder surfaceHolder, int format, int width, int height) {

setSurface(surfaceHolder);

}

@Override

public void surfaceDestroyed(SurfaceHolder surfaceHolder) {

isSurface = false;

close();

}

};

private Camera.ShutterCallback onShutterCallback = new Camera.ShutterCallback() {

@Override

public void onShutter() {

// ShutterCallback is called twice.

if (!isShutter) {

mCallback.onShutter();

isShutter = true;

}

}

};

private Camera.PictureCallback onJpegPictureCallback = new Camera.PictureCallback() {

@Override

public void onPictureTaken(byte[] data, Camera camera) {

mParameters.set("RIC_PROC_STITCHING", "RicStaticStitching");

mCamera.setParameters(mParameters);

mCamera.stopPreview();

// input the still image data, and the processed image is returned.

byte[] dst = mImageProcessor.process(data);

String dateTime = getDateTime();

if (dst != null) {

// save image

String fileName = String.format("edge_%s.jpg", dateTime);

String fileUrl = DCIM + "/" + fileName;

try (FileOutputStream fileOutputStream = new FileOutputStream(fileUrl)) {

fileOutputStream.write(dst); // <- saving image

registerDatabase(fileName, fileUrl); // <- register the image path with database

Log.d("CameraFragment", "save: " + fileUrl);

} catch (IOException e) {

e.printStackTrace();

}

fileName = String.format("theta_%s.jpg", dateTime);

fileUrl = DCIM + "/" + fileName;

// save captured image

try (FileOutputStream fileOutputStream = new FileOutputStream(fileUrl)) {

fileOutputStream.write(data); // <- saving image

registerDatabase(fileName, fileUrl); // <- register the image path with database

Log.d("CameraFragment", "save: " + fileUrl);

} catch (IOException e) {

e.printStackTrace();

}

}

// process image diff

// byte[] dst2 = mImageProcessor.process2(dst, data);

byte[] dst2 = mImageProcessor.process3(data);

if (dst2 != null) {

// save image

// String fileName = String.format("diff_%s.jpg", dateTime);

String fileName = String.format("painting_%s.jpg", dateTime);

String fileUrl = DCIM + "/" + fileName;

try (FileOutputStream fileOutputStream = new FileOutputStream(fileUrl)) {

fileOutputStream.write(dst2); // <- saving image

registerDatabase(fileName, fileUrl); // <- register the image path with database

Log.d("CameraFragment", "save: " + fileUrl);

} catch (IOException e) {

e.printStackTrace();

}

}

byte[] dst3 = mImageProcessor.process4(data);

if (dst3 != null) {

// save image

// String fileName = String.format("diff_%s.jpg", dateTime);

String fileName = String.format("negative_%s.jpg", dateTime);

String fileUrl = DCIM + "/" + fileName;

try (FileOutputStream fileOutputStream = new FileOutputStream(fileUrl)) {

fileOutputStream.write(dst3); // <- saving image

registerDatabase(fileName, fileUrl); // <- register the image path with database

Log.d("CameraFragment", "save: " + fileUrl);

} catch (IOException e) {

e.printStackTrace();

}

}

mCallback.onPictureTaken();

mCamera.startPreview();

isCapturing = false;

}

};

private FileObserver fileObserver = new FileObserver(DCIM) {

@Override

public void onEvent(int event, String path) {

switch (event) {

case FileObserver.OPEN:

Log.d("debug", "OPEN:" + path);

break;

case FileObserver.CLOSE_NOWRITE:

Log.d("debug", "CLOSE:" + path);

break;

case FileObserver.CREATE:

Log.d("debug", "CREATE:" + path);

break;

case FileObserver.DELETE:

Log.d("debug", "DELETE:" + path);

break;

case FileObserver.CLOSE_WRITE:

Log.d("debug", "CLOSE_WRITE:" + path);

break;

case FileObserver.MODIFY:

//Log.d("debug", "MODIFY:" + path);

break;

default:

Log.d("debug", "event:" + event + ", " + path);

break;

}

}

};

private ImageProcessor mImageProcessor;

public CameraFragment() {

}

@Override

public View onCreateView(LayoutInflater inflater, ViewGroup container,

Bundle savedInstanceState) {

return inflater.inflate(R.layout.fragment_main, container, false);

}

@Override

public void onViewCreated(final View view, Bundle savedInstanceState) {

SurfaceView surfaceView = (SurfaceView) view.findViewById(R.id.surfaceView);

mSurfaceHolder = surfaceView.getHolder();

mSurfaceHolder.addCallback(mSurfaceHolderCallback);

mAudioManager = (AudioManager) getContext()

.getSystemService(Context.AUDIO_SERVICE);//for video

}

@Override

public void onAttach(Context context) {

super.onAttach(context);

if (context instanceof CFCallback) {

mCallback = (CFCallback) context;

}

mImageProcessor = new ImageProcessor();

}

@Override

public void onStart() {

super.onStart();

// startWatching(); // for debug

mImageProcessor.init();

if (isSurface) {

open();

setSurface(mSurfaceHolder);

}

}

@Override

public void onStop() {

// stopWatching(); // for debug

close();

super.onStop();

mImageProcessor.cleanup();

}

@Override

public void onDetach() {

super.onDetach();

mCallback = null;

}

public void startWatching() {

fileObserver.startWatching();

}

public void stopWatching() {

fileObserver.stopWatching();

}

public void takePicture() {

if (!isCapturing) {

isCapturing = true;

isShutter = false;

mParameters.setPictureSize(5376, 2688);

mParameters.set("RIC_SHOOTING_MODE", "RicStillCaptureStd");

mParameters.set("RIC_EXPOSURE_MODE", "RicAutoExposureP");

mParameters.set("RIC_PROC_STITCHING", "RicDynamicStitchingAuto");

mParameters.set("recording-hint", "false");

mParameters.setJpegThumbnailSize(320, 160);

mCamera.setParameters(mParameters);

mCamera.takePicture(onShutterCallback, null, onJpegPictureCallback);

Log.d("debug", "mCamera.takePicture()");

}

}

public boolean isMediaRecorderNull() {

return mMediaRecorder == null;

}

public boolean takeVideo() {

boolean result = true;

if (mMediaRecorder == null) {

mMediaRecorder = new MediaRecorder();

mAudioManager.setParameters("RicUseBFormat=true");

mAudioManager.setParameters("RicMicSelect=RicMicSelectAuto");

mAudioManager

.setParameters("RicMicSurroundVolumeLevel=RicMicSurroundVolumeLevelNormal");

mParameters.set("RIC_PROC_STITCHING", "RicStaticStitching");

mParameters.set("RIC_SHOOTING_MODE", "RicMovieRecording4kEqui");

// for 4K video

CamcorderProfile camcorderProfile = CamcorderProfile.get(mCameraId, 10013);

mParameters.set("video-size", "3840x1920");

mParameters.set("recording-hint", "true");

mCamera.setParameters(mParameters);

mCamera.unlock();

mMediaRecorder.setCamera(mCamera);

mMediaRecorder.setVideoSource(MediaRecorder.VideoSource.CAMERA);

mMediaRecorder.setAudioSource(MediaRecorder.AudioSource.UNPROCESSED);

camcorderProfile.videoCodec = MediaRecorder.VideoEncoder.H264;

camcorderProfile.audioCodec = MediaRecorder.AudioEncoder.AAC;

camcorderProfile.audioChannels = 1;

mMediaRecorder.setProfile(camcorderProfile);

mMediaRecorder.setVideoEncodingBitRate(56000000); // 56 Mbps

mMediaRecorder.setVideoFrameRate(30); // 30 fps

mMediaRecorder.setMaxDuration(1500000); // max: 25 min

mMediaRecorder.setMaxFileSize(20401094656L); // max: 19 GB

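// Take the timestamp once so the MP4 and WAV names cannot differ across a second boundary.

String dateTime = getDateTime();

String videoFile = String.format("%s/plugin_%s.mp4", DCIM, dateTime);

String wavFile = String.format("%s/plugin_%s.wav", DCIM, dateTime);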

String videoWavFile = String.format("%s,%s", videoFile, wavFile);

mMediaRecorder.setOutputFile(videoWavFile);

mMediaRecorder.setPreviewDisplay(mSurfaceHolder.getSurface());

mMediaRecorder.setOnErrorListener(onErrorListener);

mMediaRecorder.setOnInfoListener(onInfoListener);

try {

mMediaRecorder.prepare();

mMediaRecorder.start();

Log.d("debug", "mMediaRecorder.start()");

instanceRecordMP4 = new File(videoFile);

instanceRecordWAV = new File(wavFile);

} catch (IOException | RuntimeException e) {

e.printStackTrace();

stopMediaRecorder();

result = false;

}

} else {

try {

mMediaRecorder.stop();

Log.d("debug", "mMediaRecorder.stop()");

} catch (RuntimeException e) {

// cancel recording

instanceRecordMP4.delete();

instanceRecordWAV.delete();

result = false;

} finally {

stopMediaRecorder();

}

}

return result;

}

public boolean isCapturing() {

return isCapturing;

}

private void open() {

if (mCamera == null) {

int numberOfCameras = Camera.getNumberOfCameras();

for (int i = 0; i < numberOfCameras; i++) {

Camera.CameraInfo info = new Camera.CameraInfo();

Camera.getCameraInfo(i, info);

if (info.facing == Camera.CameraInfo.CAMERA_FACING_BACK) {

mCameraInfo = info;

mCameraId = i;

}

}

// Open the camera once, after the loop has picked the back-facing camera ID.

mCamera = Camera.open(mCameraId);

mCamera.setErrorCallback(mErrorCallback);

mParameters = mCamera.getParameters();

mParameters.set("RIC_SHOOTING_MODE", "RicMonitoring");

mCamera.setParameters(mParameters);

}

}

private void close() {

stopMediaRecorder();

if (mCamera != null) {

mCamera.stopPreview();

mCamera.setPreviewCallback(null);

mCamera.setErrorCallback(null);

mCamera.release();

mCamera = null;

}

}

private void stopMediaRecorder() {

if (mMediaRecorder != null) {

try {

mMediaRecorder.stop();

} catch (RuntimeException e) {

e.printStackTrace();

}

mMediaRecorder.reset();

mMediaRecorder.release();

mMediaRecorder = null;

mCamera.lock();

}

}

private void setSurface(@NonNull SurfaceHolder surfaceHolder) {

if (mCamera != null) {

mCamera.stopPreview();

try {

mCamera.setPreviewDisplay(surfaceHolder);

mParameters.setPreviewSize(1920, 960);

mCamera.setParameters(mParameters);

} catch (IOException e) {

e.printStackTrace();

close();

// close() releases the camera and sets mCamera to null, so skip startPreview().

return;

}

mCamera.startPreview();

}

}

private String getDateTime() {

// Timestamp in yyyyMMddHHmmss form, used in output file names.

// A fixed locale keeps the digits ASCII whatever the device language is.

SimpleDateFormat sdf = new SimpleDateFormat("yyyyMMddHHmmss", java.util.Locale.US);

return sdf.format(new Date());

}

private void registerDatabase(String fileName, String filePath){

ContentValues values = new ContentValues();

ContentResolver contentResolver = this.getContext().getContentResolver();

values.put(MediaStore.Images.Media.MIME_TYPE, "image/jpeg");

values.put(MediaStore.Images.Media.TITLE, fileName);

values.put(MediaStore.Images.Media.DATA, filePath);

contentResolver.insert(MediaStore.Images.Media.EXTERNAL_CONTENT_URI, values);

}

public interface CFCallback {

void onShutter();

void onPictureTaken();

}

}
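The video branch of takeVideo() derives both output names from a yyyyMMddHHmmss timestamp and hands MediaRecorder a single comma-separated "video,wav" string. The naming step can be tried off-device with the small sketch below. The class name RecordingNames, its two helper methods and the /tmp/DCIM directory are hypothetical and only serve the illustration; the time zone is pinned to UTC here so the output is reproducible, whereas the plugin uses the device default.

```java
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.Locale;
import java.util.TimeZone;

// Hypothetical helper, not part of the plugin: mirrors the file-naming scheme only.
class RecordingNames {

    // Formats a date as yyyyMMddHHmmss in the given time zone.
    static String timestamp(Date date, TimeZone zone) {
        SimpleDateFormat sdf = new SimpleDateFormat("yyyyMMddHHmmss", Locale.US);
        sdf.setTimeZone(zone);
        return sdf.format(date);
    }

    // Builds { videoFile, wavFile, "videoFile,wavFile" } from one shared timestamp,
    // so the two file names always carry the same date-time part.
    static String[] pair(String dir, String stamp) {
        String video = String.format("%s/plugin_%s.mp4", dir, stamp);
        String wav = String.format("%s/plugin_%s.wav", dir, stamp);
        return new String[] { video, wav, video + "," + wav };
    }

    public static void main(String[] args) {
        // Assumed directory for illustration; the plugin uses its own DCIM constant.
        String stamp = timestamp(new Date(), TimeZone.getTimeZone("UTC"));
        String[] names = pair("/tmp/DCIM", stamp);
        System.out.println(names[2]);
    }
}
```

Passing the timestamp in as an argument, rather than reading the clock inside pair(), keeps the MP4 and WAV names in step and makes the helper easy to check with a fixed date.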