Community member Shun Yamashita of fulldepth is using an RPi4 to stream the THETA to a Windows PC. I updated the Linux streaming community document with additional information, and I'll put the relevant pieces here for convenience.

Architecture

- The Raspberry Pi is inside a drone and connected to the Z1 with a USB cable. It runs Raspberry Pi OS with the GStreamer sample code and driver from this site.

- The RPi4 transmits the video stream from the drone to a Windows PC on a boat. I'm not sure how the RPi4 and the Windows PC are connected, whether by a physical cable or some type of radio transmitter.

- The RPi4 sends the video stream as RTP over UDP.
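Because the stream is sent as RTP over UDP, each UDP datagram that reaches the Windows PC starts with a 12-byte fixed RTP header (RFC 3550). The C sketch below parses that header and counts missing packets from the sequence numbers, which is one simple way to check for loss on the drone-to-boat link. It is illustrative only and not part of the THETA sample code; the helper names are hypothetical, and the CSRC list and header extensions are not handled.

```c
#include <stdint.h>
#include <stddef.h>

/* Fields of the 12-byte fixed RTP header (RFC 3550, section 5.1). */
typedef struct {
    unsigned version;      /* always 2 for RTP */
    unsigned marker;       /* for H.264, set on the last packet of a frame */
    unsigned payload_type; /* dynamic type; rtph264pay defaults to 96 */
    uint16_t sequence;     /* +1 per packet; gaps indicate lost datagrams */
    uint32_t timestamp;    /* 90 kHz clock for video */
    uint32_t ssrc;         /* identifies the sending stream */
} rtp_header;

/* Hypothetical helper: parse the fixed header from a UDP payload.
 * Returns 0 on success, -1 if the buffer is too short or not RTP v2. */
static int rtp_parse_header(const uint8_t *buf, size_t len, rtp_header *out)
{
    if (len < 12)
        return -1;
    out->version = buf[0] >> 6;
    if (out->version != 2)
        return -1;
    out->marker = buf[1] >> 7;
    out->payload_type = buf[1] & 0x7F;
    out->sequence = (uint16_t)((buf[2] << 8) | buf[3]);
    out->timestamp = ((uint32_t)buf[4] << 24) | ((uint32_t)buf[5] << 16) |
                     ((uint32_t)buf[6] << 8) | (uint32_t)buf[7];
    out->ssrc = ((uint32_t)buf[8] << 24) | ((uint32_t)buf[9] << 16) |
                ((uint32_t)buf[10] << 8) | (uint32_t)buf[11];
    return 0;
}

/* Hypothetical helper: packets missing between two consecutively received
 * sequence numbers, with 16-bit wraparound (65535 -> 0 means no loss).
 * Assumes packets arrive in order; reordering would look like a large gap. */
static uint16_t rtp_seq_gap(uint16_t prev, uint16_t cur)
{
    return (uint16_t)(cur - prev - 1);
}
```

On the receiving side, you would call `rtp_parse_header()` on each datagram and add `rtp_seq_gap()` between consecutive packets to a running loss counter.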

Implementation

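This is the modification to the pipeline in `gst/gst_viewer.c`:

```c
/* Packetize the camera's H.264 stream as RTP and send it over UDP
 * to the receiving PC at 192.168.0.15, port 9000. */
src.pipeline = gst_parse_launch(
    " appsrc name=ap ! queue ! h264parse ! queue"
    " ! rtph264pay ! udpsink host=192.168.0.15 port=9000",
    NULL);
```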
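The sender pipeline above hard-codes the receiver's address. As a sketch only, the C helpers below build the same pipeline description from a host and port, plus a matching receiver pipeline that one might run on the Windows PC. The helper names are hypothetical, and the receiver elements (`udpsrc`, `rtph264depay`, `avdec_h264`, `autovideosink`) are an assumption about the Windows setup, not something described in the original report. `udpsrc` needs explicit RTP caps because UDP carries no media type information; `payload=96` is chosen to match `rtph264pay`'s default payload type.

```c
#include <stdio.h>
#include <string.h>
#include <stddef.h>

/* Hypothetical helper: the sender pipeline from gst_viewer.c with the
 * destination host and port passed in. Returns 0 on success, -1 on bad
 * input or if the buffer is too small. */
static int build_sender_pipeline(char *buf, size_t size, const char *host, int port)
{
    if (host == NULL || strlen(host) == 0 || port < 1 || port > 65535)
        return -1;
    int n = snprintf(buf, size,
                     "appsrc name=ap ! queue ! h264parse ! queue"
                     " ! rtph264pay ! udpsink host=%s port=%d",
                     host, port);
    /* snprintf returns the length it wanted to write; treat truncation as an error */
    return (n < 0 || (size_t)n >= size) ? -1 : 0;
}

/* Hypothetical helper: a receiver pipeline for the Windows PC that
 * depayloads, decodes and displays the RTP/H.264 stream. */
static int build_receiver_pipeline(char *buf, size_t size, int port)
{
    if (port < 1 || port > 65535)
        return -1;
    int n = snprintf(buf, size,
                     "udpsrc port=%d caps=\"application/x-rtp, media=video, "
                     "clock-rate=90000, encoding-name=H264, payload=96\""
                     " ! rtph264depay ! h264parse ! avdec_h264"
                     " ! videoconvert ! autovideosink",
                     port);
    return (n < 0 || (size_t)n >= size) ? -1 : 0;
}
```

Either string can be passed to `gst_parse_launch()`. If the receiver is started after the sender, it may not receive the SPS/PPS headers it needs to begin decoding; setting `rtph264pay`'s `config-interval` property (for example `config-interval=1`) makes the payloader resend them periodically.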

Equipment

The RPi4 has 4GB of RAM.

Update on Long-term Streaming at 4K

I have posted a long update on continuous live streaming to the community document, available here.

To make the information easier to find for the casual reader, I will also put some key points of the update here.

Long-Term Streaming

With firmware 1.60.1 or newer, the Z1 can stream indefinitely, and its battery can charge while streaming at 4K. To stream indefinitely, however, you need the proper equipment.

The USB port powering the RICOH THETA needs to supply approximately 900 mA of current.

In my tests, most USB 3.0, 3.1, and 3.2 ports on Linux computers did not supply the required electrical current.

If your computer cannot supply 900 mA while streaming data, you will need to use a powered hub with the proper specification.
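As a rough power budget, assuming the nominal 5 V USB bus voltage (real ports vary somewhat), the ~900 mA requirement works out to about 4.5 W reserved for the camera's port, and a 1.5 A port is rated for 7.5 W. This is handy when comparing powered hubs whose supplies are rated in watts:

```latex
P_{\text{Z1}} = V \times I = 5\,\text{V} \times 0.9\,\text{A} = 4.5\,\text{W}
\qquad
P_{\text{1.5 A port}} = 5\,\text{V} \times 1.5\,\text{A} = 7.5\,\text{W}
```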

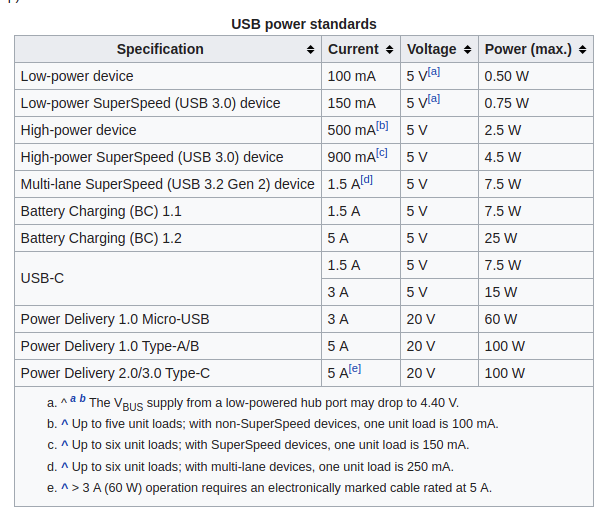

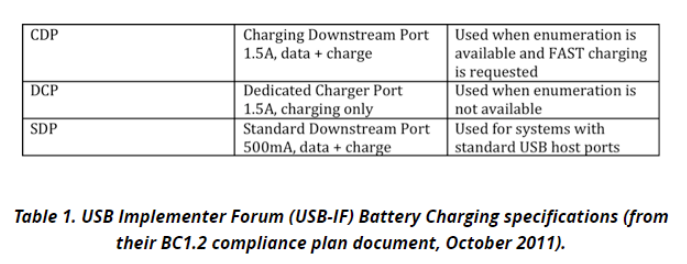

The USB Battery Charging 1.2 (BC 1.2) specification defines different types of charging ports. You will need a BC 1.2 Charging Downstream Port (CDP), which provides data plus up to 1.5 A of charging current. The THETA Z1 will only consume about 0.9 A of the 1.5 A capacity.

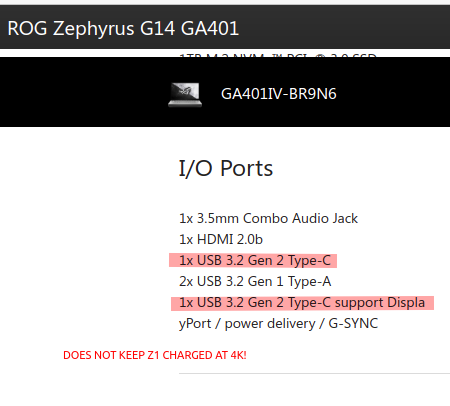
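To make the comparison concrete, the C sketch below encodes nominal current limits for a few port types and computes how much headroom each leaves over the Z1's ~900 mA draw. The values are nominal ratings (USB 2.0 and single-lane USB 3.x standard ports, and BC 1.2 CDP/DCP), not measurements, and the names are hypothetical. On paper, a USB 3.x standard port is rated at exactly the Z1's draw with no margin, while a CDP leaves about 600 mA spare. A Dedicated Charging Port (DCP) has no data connection, so it cannot carry the stream at all.

```c
#include <stdbool.h>

/* Port types considered in this sketch. */
typedef enum {
    SDP_USB2, /* USB 2.0 standard downstream port, 500 mA */
    SDP_USB3, /* USB 3.x single-lane standard port, 900 mA */
    BC12_CDP, /* BC 1.2 Charging Downstream Port, up to 1.5 A with data */
    BC12_DCP  /* BC 1.2 Dedicated Charging Port, charge only, no data */
} usb_port_type;

typedef struct {
    int max_current_ma;
    bool carries_data;
} usb_port_caps;

static usb_port_caps port_caps(usb_port_type t)
{
    switch (t) {
    case SDP_USB2: return (usb_port_caps){500, true};
    case SDP_USB3: return (usb_port_caps){900, true};
    case BC12_CDP: return (usb_port_caps){1500, true};
    case BC12_DCP: return (usb_port_caps){1500, false};
    }
    return (usb_port_caps){0, false};
}

/* Approximate Z1 draw while streaming at 4K, from the text above. */
#define Z1_STREAM_CURRENT_MA 900

/* Returns false if the port cannot carry the stream (no data lines).
 * Otherwise stores rated current minus the Z1's draw in *headroom_ma
 * (zero or negative means no margin on paper) and returns true. */
static bool z1_headroom_ma(usb_port_type t, int *headroom_ma)
{
    usb_port_caps c = port_caps(t);
    if (!c.carries_data)
        return false;
    *headroom_ma = c.max_current_ma - Z1_STREAM_CURRENT_MA;
    return true;
}
```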

It's likely that USB Type-C (not USB 3.0 with a USB-C connector) and USB PD can also deliver more than 900 mA, but I did not test these. Note that my Asus Zephyrus laptop has USB-C connectors directly on the laptop body, but these physical ports comply with the USB 3.2 specification, not USB Type-C. USB 3.2 does not require USB Power Delivery.

From the table below, it would appear that USB 3.2 Gen 2 delivers the required electrical current. However, I wasn't able to keep the Z1 charged indefinitely at 4K with my ROG Zephyrus G14 GA401.

Here are the specifications of my laptop.

Long-term Streaming Platform Tests

| Platform | Result |
|---|---|
| Acer Predator 300 laptop with onboard USB 3.1 ports | Success. Battery charged while streaming. |
| Jetson Nano with external powered USB hub with BC 1.2 | Success. Battery charged. |
| Jetson Nano using onboard USB 3 ports | Fail. Battery drained. |
| Desktop computer with Intel X99 Wellsburg motherboard and USB 3.1 ports | Fail. Battery drained. |
| Asus Zephyrus laptop with USB 3.2 ports | Fail. Battery drained. |
| Desktop computer with Intel B85 motherboard and USB 3.0 ports | Fail. Battery drained. |