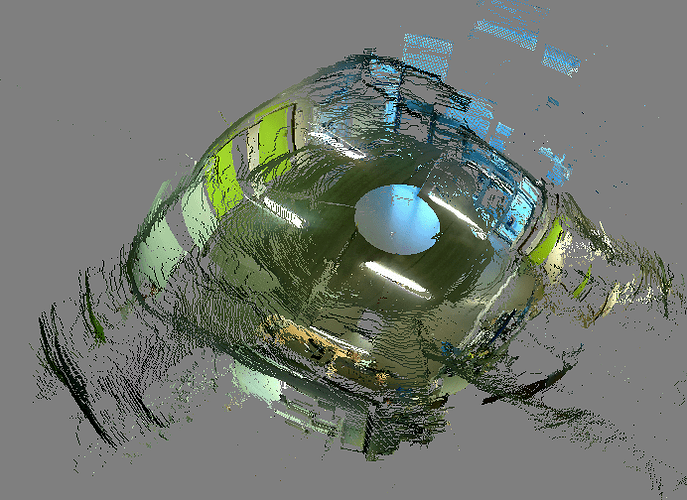

We are specifically interested in using Ricoh cameras as a stereo setup because we want to use the entire 360° view provided by the camera to estimate depth. This is only possible with omnidirectional cameras such as the Ricoh THETA. The figure below shows such a 3D reconstruction, which we obtained from two Ricoh THETA S cameras.

We hope to improve the quality by using the higher resolution offered by the THETA Z1 and also by properly synchronizing the images.

In the video you uploaded, the camera hardware supports an external trigger and achieves very good synchronization (on the order of nanoseconds). For the Ricoh camera, I do not see any such hardware facility. However, the USB API you mentioned should help with synchronization. Since it is essentially a PTP camera, we should be able to synchronize the clocks (on the order of microseconds). I have contacted another engineer who is more familiar with the protocol. If I find a solution, I will certainly share it with the community.
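Until then, here is a rough sketch of the host-side part of the idea: release the capture command to both cameras at the same instant and record how far apart the two calls actually were. This is only an illustration under assumptions. `fire_together` and `skew` are names I made up, and the `trigger` callables are hypothetical placeholders for whatever actually sends the capture command over USB; they are not part of any Ricoh API. It also measures only the skew on the host, not the delay inside each camera between receiving the command and exposing the sensor.

```python
import threading
import time


def fire_together(triggers):
    """Call every trigger callable as close to simultaneously as threads allow.

    Returns one host timestamp per trigger (seconds, from time.perf_counter),
    taken immediately before that trigger is called.
    """
    barrier = threading.Barrier(len(triggers))
    stamps = [None] * len(triggers)

    def worker(index, trigger):
        barrier.wait()                      # all threads are released together
        stamps[index] = time.perf_counter()
        trigger()                           # hypothetical: send the capture command

    threads = [
        threading.Thread(target=worker, args=(i, trig))
        for i, trig in enumerate(triggers)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return stamps


def skew(stamps):
    """Largest difference between any two trigger timestamps, in seconds."""
    return max(stamps) - min(stamps)


if __name__ == "__main__":
    # Placeholder triggers; replace with real capture calls for each camera.
    stamps = fire_together([lambda: None, lambda: None])
    print(f"host-side trigger skew: {skew(stamps) * 1e6:.1f} microseconds")
```

The skew printed here is a lower bound on the real capture offset, so it is mainly useful for checking that the host is not the bottleneck before looking at the cameras themselves.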

Also, do you know if Ricoh cameras are global shutter or rolling shutter?