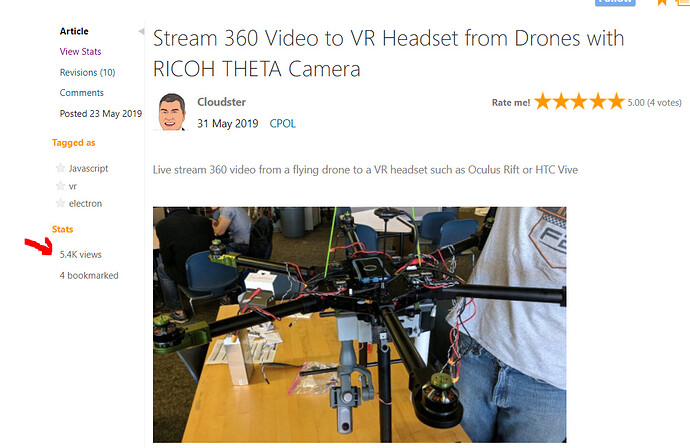

Just figured I would drop a little project update here about the senior design project I worked on that used a Ricoh Theta V. I know @codetricity and others on here would be interested, since I have seen people talking about sending Ricoh Theta V video from a drone, but as far as I know no one has demonstrated it yet.

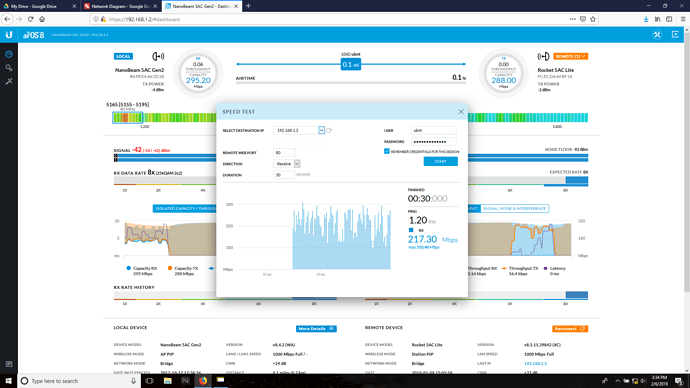

We built a system that lets a ground operator view a livestream from a drone in their VR headset. The system sends the entire 360 degree video to the ground station, where it is recorded and streamed live to the pilot wearing the VR headset. During testing we were able to stream 1920x960 video to our ground station a quarter mile (about 400 m) away with less than 200ms of lag! The payload contains all the transmission and filming hardware, and it can easily be mounted onto any drone that can lift a ~3kg payload.

Here are some demo videos. You will notice that FT1 is broken up. That is because in our excitement we forgot to keep the ground antenna pointed at the drone. But we were able to reconnect to it while it was in the air and continue streaming and recording:

System Overview

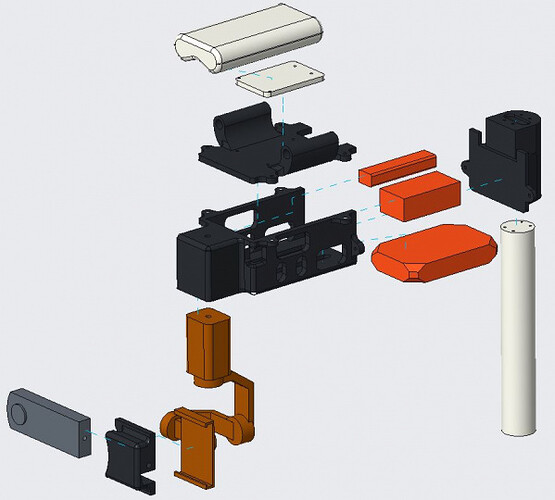

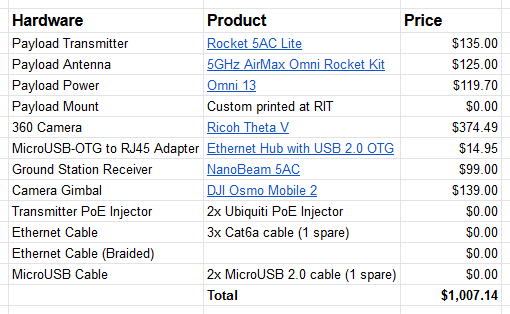

You can see a quick pic of the setup in this photo. The camera is mounted in a DJI Osmo Mobile 2 gimbal, which is attached to our 3D printed payload. The white thing on the other side of the payload is the transmission antenna: an Ubiquiti Omni antenna connected to an Ubiquiti Rocket 5AC Lite. An Omnicharge 13 (which powers the hardware) and the PoE adapter for the Ubiquiti hardware are stuffed inside the gray payload.

We used Ubiquiti transmission hardware so we could transmit all the data we needed over long ranges. At the ground station we had an Ubiquiti NanoBeam 5AC that we kept pointed at the drone to maintain good signal strength. These two radios act as a point-to-point bridge that puts the ground station PC and the camera on a single network. We connected the Theta V to the Rocket 5AC using a USB-to-Ethernet adapter, as has been discussed on this forum before. We used a setup similar to the one shown here so that we could charge the camera while using it, which gave us effectively unlimited recording time.

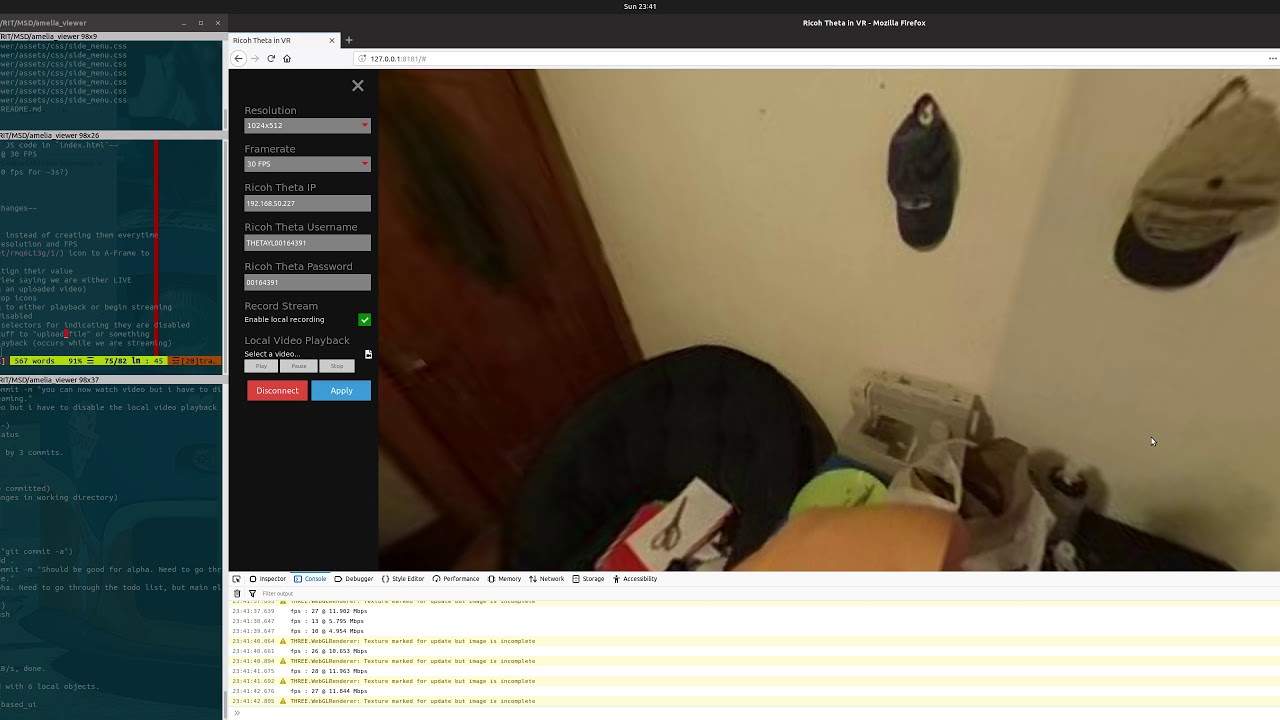

On the ground station PC we used some software I wrote that is freely available on GitHub.

Here is a demo video of the software. Please disregard my messy room!

The software does the following:

- Communicate with the camera using the Ricoh API

- Stream live video from the camera at up to 1920x960

- Record video while also displaying it

- Play back recorded videos

- Display live or pre-recorded videos in a VR headset (Oculus/Vive)
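For anyone who wants to try talking to the camera themselves, here is a minimal sketch (not the code from my repo) of how commands are sent through the Open Spherical Camera API that the Theta implements: every command is a JSON body POSTed to `/osc/commands/execute`. `camera.setOptions` with a `previewFormat` option selects the preview resolution, and `camera.getLivePreview` returns a long-lived MJPEG stream. The camera address below is a hypothetical placeholder (it depends on how your USB-to-Ethernet bridge is set up), and no authentication is handled. The 1920x960 at 8 FPS default matches the limit described under Future Work.

```python
import json
import urllib.request

# Hypothetical placeholder: the camera's real IP depends on your network setup.
CAMERA_URL = "http://192.168.1.1"
EXECUTE_PATH = "/osc/commands/execute"


def osc_command(name, parameters=None):
    """Build the UTF-8 JSON body for an OSC commands/execute request."""
    body = {"name": name}
    if parameters is not None:
        body["parameters"] = parameters
    return json.dumps(body).encode("utf-8")


def set_preview_format(width=1920, height=960, framerate=8):
    """Body for camera.setOptions that selects the live-preview format."""
    preview = {"width": width, "height": height, "framerate": framerate}
    return osc_command("camera.setOptions", {"options": {"previewFormat": preview}})


def post(body, base_url=CAMERA_URL):
    """POST a command body and return the open HTTP response.

    For camera.getLivePreview the response body is an MJPEG stream that
    should be read incrementally rather than all at once.
    """
    request = urllib.request.Request(
        base_url + EXECUTE_PATH,
        data=body,
        headers={"Content-Type": "application/json;charset=utf-8"},
        method="POST",
    )
    return urllib.request.urlopen(request)
```

Typical use would be `post(set_preview_format())` followed by `post(osc_command("camera.getLivePreview"))`, then reading the response in chunks and handing them to a frame parser.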

Future Work

TBH the drone platform I had to build is pretty rock solid, and so is the transmission system, though better transmission hardware could obviously be purchased and the payload’s weight could be reduced. The current gimbal may also be overkill. Most of the remaining improvements involve the software.

The first big hiccup in the software is that, while I originally built it to run in Electron, it turns out the version of Chromium that Electron is built on did not support the OpenVR bindings for the Oculus Rift when I wrote it (I still think it doesn’t). So even though everything else runs in Electron, I ended up hosting the UI with an NPM app that is started from the Electron app, then viewing the actual UI in Firefox, and then things worked. Unfortunately this is a fairly major limitation, because some of Electron’s desktop-oriented features cannot be used in the browser. Once Electron supports the Oculus Rift, I will port everything back into the Electron app so you don’t have to open Firefox.

I also had to write this code in a very short amount of time (~2 weeks), so I had to settle for the official Ricoh API, which is buggy and has limited options. For instance, I can’t stream in 4K at all, and my framerate at 1920x960 is limited to 8 FPS (bleh). I am also not a big fan of streaming with MJPEG, since my sketchy “player” doesn’t handle dropped frames well and the format isn’t optimized for limited bandwidth. You will also notice that in FT pt 2 the video is stuttery. As far as I can tell that is caused by a bug in the Ricoh API, because you get the same weird stuttering in the official Ricoh preview app, though maybe their video player is just as bad as mine. So future work would involve writing a custom camera plugin that lets me stream in 4K using H.265.
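On the dropped-frame problem: one simple way to make an MJPEG reader more tolerant is to split the byte stream on the JPEG start-of-image (`FF D8`) and end-of-image (`FF D9`) markers, and to resync whenever a new start marker shows up before the previous frame ended, which is what a frame cut off by packet loss looks like. This is a sketch of that idea, not how my player currently works, and it assumes frames carry no embedded thumbnail JPEGs (which would contain their own markers).

```python
SOI = b"\xff\xd8"  # JPEG start-of-image marker
EOI = b"\xff\xd9"  # JPEG end-of-image marker


def extract_frames(buffer):
    """Split a chunk of MJPEG bytes into complete JPEG frames.

    Returns (frames, leftover). Pass `leftover + next_chunk` on the next call.
    A frame whose end marker never arrives before the next start marker is
    treated as truncated and skipped, so one lost packet costs one frame
    instead of corrupting the stream.
    """
    frames = []
    pos = 0
    while True:
        start = buffer.find(SOI, pos)
        if start == -1:
            # No frame start left: drop the junk, but keep a trailing 0xFF in
            # case it is the first half of a marker split across chunks.
            return frames, (buffer[-1:] if buffer.endswith(b"\xff") else b"")
        end = buffer.find(EOI, start + 2)
        if end == -1:
            # Frame still arriving: keep it for the next call.
            return frames, buffer[start:]
        restart = buffer.find(SOI, start + 2, end)
        if restart != -1:
            # A new frame began before this one ended: resync on the new start.
            pos = restart
            continue
        frames.append(buffer[start:end + 2])
        pos = end + 2
```

The resync rule trades a little robustness for simplicity: a truncated frame is thrown away rather than displayed half-decoded, which usually looks better in a headset than a smeared image.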

The UI is also less than stellar. When things occasionally fail, they fail silently: I currently just dump all my messages and warnings to the console log. I need to show pop-up messages so the user knows what is going on. The video pumped into the headset is also just the raw video from the camera. Ideally I would present the user with telemetry that helps them fly effectively and stay aware of what they are doing (drone battery percentage, heading, orientation, speed, range, etc). I use A-Frame for the VR functionality, and AFAIK it is relatively simple to add these kinds of things on top of the video stream. I would also like to “auto orient” the camera’s view using the camera’s accelerometer. Right now you have to manually specify the orientation of the video, or else the video’s orientation will match the orientation of the camera (which in our case is sideways lol).
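For the auto-orient idea, the core math is small: while the camera is roughly stationary, the accelerometer measures only gravity, and roll and pitch follow from that vector with `atan2`. This is a hedged sketch. The axis convention (an upright, level camera reading gravity as +z) is an assumption, and I don't know how the Theta exposes its accelerometer or which axes it uses, so the signs may need flipping for the real device.

```python
import math


def roll_pitch_from_gravity(ax, ay, az):
    """Estimate roll and pitch in degrees from one accelerometer reading.

    Assumes the reading is dominated by gravity (camera not accelerating) and
    an assumed frame where an upright, level camera reads (0, 0, +g).
    Units cancel out, so raw counts, g, or m/s^2 all work.
    Roll becomes unreliable as pitch approaches +/-90 degrees.
    """
    roll = math.degrees(math.atan2(ay, az))
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    return roll, pitch
```

A camera mounted sideways, like ours, would read gravity along one horizontal axis, giving a roll of about 90 degrees. The negated angles could then be applied to the `rotation` attribute of the A-Frame entity that displays the video, though which viewer axis corresponds to roll depends on how the equirectangular frame is mapped onto the sphere. On a drone, the readings would also need smoothing (e.g. a low-pass filter), since flight accelerations add to gravity.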

Conclusion

Overall the project was a great success though! I can’t tell you how cool it was to fly this thing using the VR headset. It really felt like the future. As far as I know, no publicly demonstrated system does what ours does. Some companies try to get a similar effect with a gimbal synced to a headset, but they aren’t actually streaming and recording the entire 360 video, just a single FOV. This technology would be massively useful for search and rescue operations, because multiple people can view the feed at the same time. It would also be great for surveying and surveillance. I could not find any other camera that could have done this project. It was only possible because the Theta has a dev community and all this documentation. The only other camera on the market I could find that was capable of streaming live video at all was a Garmin Virb, but it only covered a single hemisphere, and there was no proof-of-concept code or footage showing it streaming live video over a network.

Unfortunately, I had to hand over all the hardware at the end of the semester, so I no longer have it and won’t be able to write the new plugin. Maybe after I start working in October and have some cash I will pick up a Theta V. In the meantime I will probably work on adding telemetry to the viewer.

I really think this is the future of drone flight, and I am really happy I found a cool community like this that had all the info and resources I needed to make this project a success!

Enjoy this video showing a recording we made in 4K. You can view the video in 360 on YouTube. This kind of video quality is the goal for the live stream, and with some more work I believe it is possible.

If you have any questions, hit me up! I would be more than happy to answer anything about the setup!